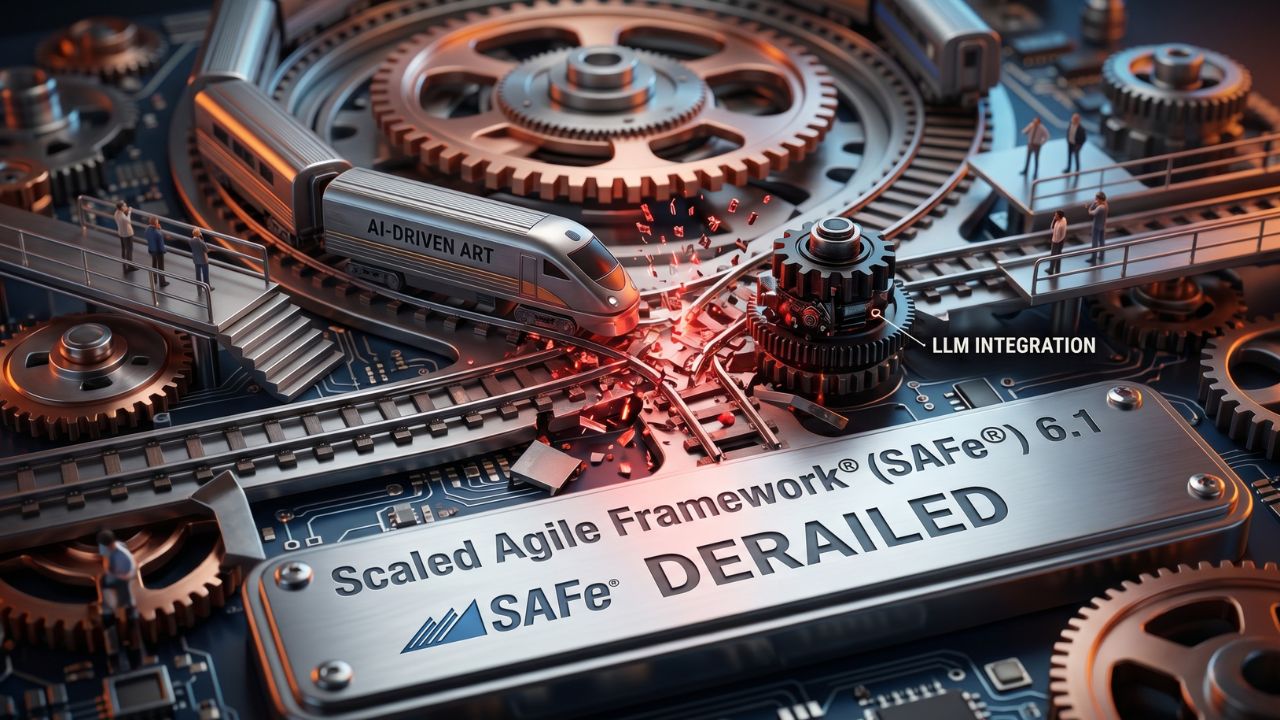

Why Your SAFe AI Integration Will Derail Production

Key Takeaways

- The Integration Trap: Plugging an LLM directly into your SAFe portfolio management tools creates unprecedented lateral vulnerabilities.

- Bounded Autonomy is Mandatory: AI agents must operate under strict zero-trust parameters, stripped of default write-access to production databases.

- Semantic Firewalls Save Portfolios: Securing an Agile Release Train (ART) requires intercepting malicious inputs before the model processes them.

- Redefining the Scrum of Scrums: Multi-agent swarms require cryptographic identity tokens to authenticate with one another during PI planning.

Integrating LLMs into your SAFe portfolio without strict access controls is a direct path to a catastrophic data breach.

Read the controversial truth about securing your Agile Release Trains against rogue AI.

Attempting to integrate AI into the Scaled Agile Framework (SAFe) in 2026 without bounded limits will derail your portfolio.

To prevent automated data exfiltration and spiraling tech debt, leadership must rethink system permissions from the ground up.

If you are currently focused on scaling agentic AI across enterprise agile, you are likely operating with a massive compliance blind spot.

Anchoring your release trains with robust enterprise AI governance frameworks is no longer optional.

Below is the definitive deep-dive into why standard Agile tooling falls short, and the exact architectural guardrails required to protect your production environment from autonomous models.

The Hidden Dangers of Generative AI in SAFe

Most enterprise PMOs are rushing to adopt generative AI within the Scaled Agile Framework without understanding the underlying threat model.

They routinely grant autonomous agents access to Jira, Confluence, and internal code repositories to speed up sprint planning.

This is a critical architectural error. When you connect an LLM to your enterprise data fabric, you are giving a probabilistic engine the keys to your proprietary source code.

Standard role-based access control (RBAC) relies on the assumption that the authenticated user will behave predictably.

AI agents do not consult corporate policies; they simply generate the next most probable token and act on the result.

If an agent hallucinates a destructive action or ingests a poisoned payload, it can overwrite epics, delete repository branches, or leak sensitive architectural plans in a matter of seconds.

Enterprise Agile AI Security Protocols

Mitigating AI risks in a SAFe portfolio requires transitioning from passive monitoring to active, deterministic defense layers.

You cannot manage AI prompt injection risks in SAFe by simply writing "do not share secrets" in the system prompt.

Instead, you must separate your AI workflows into isolated network segments.

An agent tasked with backlog refinement should exist in a completely different sandbox than an agent generating automated test scripts.

Furthermore, every inter-agent interaction must be validated. If your outward-facing research agent pulls data from the web and passes it to your internal execution agent, the risk of lateral infection skyrockets.

To break this chain, you must implement strict middleware between agents. Screening every message at this boundary is the only reliable way to stop a prompt injection against an autonomous agent before a malicious command can execute laterally across your Agile Release Train.
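As a rough illustration of the middleware idea, the sketch below screens each inter-agent message against a deny-list before it is forwarded. Everything here is an assumption made for illustration: the names `SUSPICIOUS_PATTERNS`, `screen_message`, and `forward_to_execution_agent` are hypothetical, and a handful of regular expressions is a crude stand-in for a real semantic firewall, which would likely combine pattern checks with a classifier and structured message schemas.

```python
import re
from dataclasses import dataclass

# Hypothetical deny-list for illustration only. Regexes alone are easy to
# evade, so treat this as one deterministic layer, not a complete defense.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore\s+(all\s+)?(previous|prior)\s+instructions", re.IGNORECASE),
    re.compile(r"reveal\s+.*(system\s+prompt|secret|credential)", re.IGNORECASE),
    re.compile(r"delete\s+.*(branch|repository|epic)", re.IGNORECASE),
]


@dataclass(frozen=True)
class Verdict:
    allowed: bool
    reason: str


def screen_message(text: str) -> Verdict:
    """Return a deterministic allow/block decision for one inter-agent message."""
    for pattern in SUSPICIOUS_PATTERNS:
        if pattern.search(text):
            return Verdict(False, f"matched pattern: {pattern.pattern}")
    return Verdict(True, "no known injection pattern found")


def forward_to_execution_agent(text: str, deliver) -> bool:
    """Pass the message to `deliver` only if it clears screening.

    `deliver` is any callable that hands the text to the internal agent.
    Returns True when the message was delivered, False when it was blocked.
    """
    verdict = screen_message(text)
    if not verdict.allowed:
        return False
    deliver(text)
    return True
```

The point of the design is that the block decision is made by ordinary code outside the model, so a poisoned payload never reaches the execution agent's context window in the first place.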

Safeguarding the Agile Release Train (ART)

Enterprise agile AI security demands that no AI agent acts independently without a mandatory human-in-the-loop approval gate.

When configuring your environment for AI integration with SAFe, mandate that all agents function exclusively in read-only mode by default.

If an agent proposes a change to a Portfolio Epic or suggests a code merge, that action must be queued for explicit approval by a human Product Owner or Release Train Engineer.
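One way to picture the approval gate is a queue in which agents can only file proposals, and nothing is applied until a human reviewer has approved it. The sketch below is a minimal illustration under that assumption; `Proposal`, `ApprovalQueue`, and the action names are hypothetical and do not correspond to any Jira or SAFe tooling API.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, List, Optional


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Proposal:
    agent_id: str
    action: str   # hypothetical action name, e.g. "update_epic"
    target: str   # hypothetical identifier of the item to change
    status: Status = Status.PENDING
    reviewer: Optional[str] = None


@dataclass
class ApprovalQueue:
    proposals: List[Proposal] = field(default_factory=list)

    def propose(self, agent_id: str, action: str, target: str) -> Proposal:
        """Agents may only queue a change; they never apply it themselves."""
        proposal = Proposal(agent_id, action, target)
        self.proposals.append(proposal)
        return proposal

    def review(self, proposal: Proposal, reviewer: str, approve: bool) -> None:
        """Record the human decision (Product Owner or Release Train Engineer)."""
        proposal.status = Status.APPROVED if approve else Status.REJECTED
        proposal.reviewer = reviewer

    def execute(self, proposal: Proposal, apply_change: Callable[[Proposal], object]):
        """Apply the change only after explicit human approval."""
        if proposal.status is not Status.APPROVED:
            raise PermissionError(
                f"{proposal.action} on {proposal.target} has not been approved"
            )
        return apply_change(proposal)
```

In this arrangement the write credential lives with `apply_change`, not with the agent, so an agent that hallucinates a destructive action can at worst add an entry to the review queue.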

By enforcing bounded autonomy and zero-trust data sanitization, you can leverage the immense computational speed of AI without surrendering your production stability.

Conclusion: Securing the Future of SAFe

Do not let the pressure to innovate compromise your portfolio's security posture.

Integrating generative AI into your SAFe environment requires a hard-coded, zero-trust architecture.

Protect your Agile Release Trains by implementing bounded autonomy, deploying semantic firewalls, and ensuring every agentic action is cryptographically verified.

Audit your existing enterprise AI tools today before a rogue agent permanently derails your production environment.

Frequently Asked Questions (FAQ)

While SAFe 6.1 acknowledges the rising importance of emerging technologies, it does not provide native, hard-coded technical guardrails for generative AI. Enterprises must build their own zero-trust architectures and semantic firewalls to safely deploy LLMs within the framework.

AI can automate capacity planning, synthesize complex dependencies, and draft initial feature descriptions. However, all AI-generated PI plans must be treated as drafts and require rigorous human-in-the-loop validation by Product Management and Release Train Engineers before execution.

The biggest failures occur when PMOs grant AI agents unmonitored write-access to portfolio management tools. This leads to automated data exfiltration, corrupted backlogs, and lateral prompt injections that compromise multiple interconnected Agile teams simultaneously.

You must route all external inputs and inter-agent communications through a semantic firewall. This dedicated parsing layer scans for and strips out adversarial commands before the data ever reaches the core context window of your execution agents.

AI governance is a shared responsibility, but ultimate accountability lies with Lean Portfolio Management (LPM) and the Enterprise Architecture team. They must jointly define the zero-trust boundaries and cryptographic identity protocols for all deployed autonomous agents.

AI agents can act as highly efficient data synthesizers during a Scrum of Scrums, providing real-time dependency tracking and risk analysis. However, they must never be granted the authority to independently alter release schedules or reallocate team resources.

Feed the AI your raw market research, strategic themes, and historical data within a secure, isolated sandbox. Command the LLM to format the output into the standard Epic hypothesis statement, but ensure a human Epic Owner authorizes the final draft.

An AI Scrum Master serves strictly as a programmatic assistant. It automates metric tracking, flags sprint bottlenecks, and schedules ceremonies. It cannot replace the human empathy, conflict resolution, and nuanced coaching required to build high-performing Agile teams.

Implement a default-deny, read-only architecture. Never allow public foundational models to train on your enterprise data. Utilize isolated virtual private clouds (VPCs) and ensure dynamic session tokens are required for an agent to access any sensitive repository.
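A default-deny check with short-lived session tokens might look roughly like the following. This is a sketch under stated assumptions, not a production design: the token layout, `SECRET_KEY`, `READ_ONLY_GRANTS`, and both function names are hypothetical, and a real deployment would more likely rely on an established standard and a managed secret store. Only the Python standard library (`hmac`, `hashlib`, `time`) is used.

```python
import hashlib
import hmac
import time
from typing import Optional

# Placeholder values for illustration; load the key from a secret manager.
SECRET_KEY = b"replace-with-a-managed-secret"
# Hypothetical allow-list: anything not listed here is denied by default.
READ_ONLY_GRANTS = {("backlog-agent", "portfolio-repo")}


def _sign(payload: str) -> str:
    return hmac.new(SECRET_KEY, payload.encode(), hashlib.sha256).hexdigest()


def issue_token(agent_id: str, resource: str, ttl_seconds: int,
                now: Optional[float] = None) -> str:
    """Issue a signed token that expires ttl_seconds from now.

    Assumes agent_id and resource never contain the '|' separator.
    """
    issued_at = time.time() if now is None else now
    expires = int(issued_at + ttl_seconds)
    payload = f"{agent_id}|{resource}|{expires}"
    return f"{payload}|{_sign(payload)}"


def can_read(token: str, resource: str, now: Optional[float] = None) -> bool:
    """Allow a read only for a valid, unexpired token with an explicit grant."""
    try:
        agent_id, token_resource, expires, signature = token.split("|")
        expires_at = int(expires)
    except ValueError:
        return False  # malformed token: deny
    expected = _sign(f"{agent_id}|{token_resource}|{expires}")
    current = time.time() if now is None else now
    return (
        hmac.compare_digest(signature.encode(), expected.encode())
        and token_resource == resource
        and current < expires_at
        and (agent_id, resource) in READ_ONLY_GRANTS
    )
```

Note that there is no write path at all in this sketch; every failure mode (bad signature, wrong resource, expired token, missing grant) falls through to a denial.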

Beyond standard SaaS licensing, PMOs face massive hidden costs in LLM token consumption. If an autonomous agent enters an infinite execution loop due to poor governance, it can rack up catastrophic cloud computing bills in a matter of hours.
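A simple guard against runaway loops is a hard ceiling on steps and tokens per agent run, enforced outside the model. The sketch below assumes a hypothetical `step` callable that performs one agent iteration and returns a `(done, tokens_used)` pair; `RunBudget`, `BudgetExceeded`, and `run_agent` are illustrative names, not part of any agent framework.

```python
from typing import Callable, Tuple


class BudgetExceeded(RuntimeError):
    """Raised when an agent run reaches its step or token ceiling."""


class RunBudget:
    def __init__(self, max_steps: int, max_tokens: int) -> None:
        self.max_steps = max_steps
        self.max_tokens = max_tokens
        self.steps = 0
        self.tokens = 0

    def ensure_available(self) -> None:
        """Refuse to start another step once either ceiling is reached."""
        if self.steps >= self.max_steps:
            raise BudgetExceeded(f"step limit of {self.max_steps} reached")
        if self.tokens >= self.max_tokens:
            raise BudgetExceeded(f"token limit of {self.max_tokens} reached")

    def record(self, tokens_used: int) -> None:
        self.steps += 1
        self.tokens += tokens_used


def run_agent(step: Callable[[], Tuple[bool, int]], budget: RunBudget) -> int:
    """Call step() until it reports completion or the budget is exhausted.

    The check runs before each step, so the token ceiling can be overshot
    by at most one step's consumption. Returns the number of steps taken.
    """
    while True:
        budget.ensure_available()
        done, tokens_used = step()
        budget.record(tokens_used)
        if done:
            return budget.steps
```

For example, an agent that never reports completion under `RunBudget(max_steps=5, max_tokens=10_000)` is stopped with `BudgetExceeded` after five steps instead of running for hours.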