DeepSeek V3 vs GPT-5.4 Arena Battle: April 2026 Update

Quick Summary: Key Takeaways

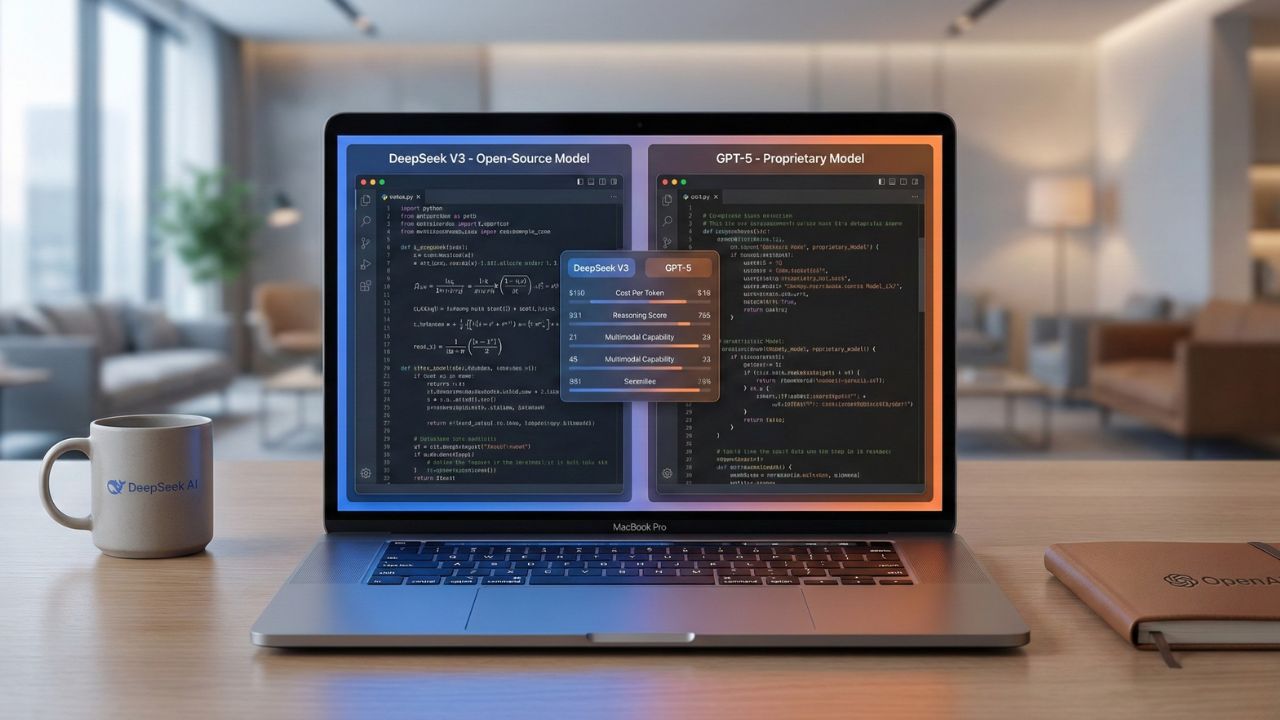

- The Price Gap: DeepSeek V3 offers comparable reasoning capabilities at approximately 1/10th the API cost of GPT-5.4.

- Coding Proficiency: In pure logic and syntax tasks on the April 2026 Leaderboard, DeepSeek's architecture continues to hold its own against OpenAI’s flagship in blind testing.

- Enterprise Safety: GPT-5.4 still holds a decisive lead in compliance, safety guardrails, and multimodal integration.

- Deployment Freedom: DeepSeek’s open weights allow local hosting, a critical advantage for privacy-focused developers.

The David vs. Goliath of April 2026

The AI narrative has officially shifted. As we look at the fresh LMSYS data, the DeepSeek vs GPT-5.4 Arena Battle is no longer about whether open-source can catch up; it is about whether proprietary models are still worth the massive premium.

This deep dive is part of our extensive guide on LMSYS Chatbot Arena Current Rankings.

For the first time on the LMSYS leaderboard, a Chinese open-source architecture has solidified its position, directly challenging the dominance of Silicon Valley giants. The April 2026 dataset suggests that for pure text and code generation, the "moat" around GPT-5.4 is rapidly evaporating.

LMSYS Chatbot Arena Snapshot (April 2026)

To provide context for the current competitive landscape, here are the Top 5 General Text models from the latest Arena data. This is the premium tier of intelligence that DeepSeek's open-weights architecture is actively disrupting:

| Rank | Model | Elo Score |
|---|---|---|
| 1 | claude-opus-4-6-thinking | 1504 |
| 2 | claude-opus-4-6 | 1500 |
| 3 | gemini-3.1-pro-preview | 1493 |
| 4 | grok-4.20-beta1 | 1491 |
| 5 | gemini-3-pro | 1486 |

*Note: GPT-5.4 and Claude 4.6 still hold strong at the very top and dominate complex multi-file benchmarks, but the fact that DeepSeek models approach these 1480+ scores completely changes the ROI calculation for developers.*
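To put the narrow spread in the table in perspective, a rating gap can be converted into a head-to-head win probability with the classic Elo expected-score formula. The sketch below is illustrative only: it assumes the Arena scores behave like standard Elo ratings on a 400-point scale (the leaderboard's actual fitting procedure may differ), and the function name `expected_win_rate` is our own.

```python
def expected_win_rate(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Expected score of model A against model B under the classic Elo model."""
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / scale))


# Ratings taken from the table above (rank 1 vs. rank 5).
rank_1 = 1504
rank_5 = 1486

p = expected_win_rate(rank_1, rank_5)
print(f"Expected win rate of rank 1 over rank 5: {p:.1%}")
```

Under these assumptions, the 18-point gap between first and fifth place works out to a win rate only a few points above a coin flip, which is why small rating differences at this tier are hard to notice in everyday use.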

Analyzing the Elo Deadlock

When we look at the raw numbers from the latest Arena refresh, the distinction between open and proprietary becomes incredibly blurry.

1. The "Reasoning" Tie: In blind A/B testing throughout April, users frequently struggle to distinguish between DeepSeek models and GPT-5.4 when asking complex math or logic questions. Both architectures exhibit advanced "Chain of Thought" (CoT) processing.

2. The Cost-Per-Token Revolution: This is where DeepSeek V3/R1 wins. For startups building AI agents, the cost difference is massive: at roughly one-tenth the API price, the same budget covers about ten times as many DeepSeek calls as GPT-5.4 calls.
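The budget arithmetic behind the cost point can be sketched in a few lines. The prices below are placeholders, not published rates: the only figure taken from this article is the roughly 10x ratio, and the helper name `calls_for_budget`, the budget, and the tokens-per-call value are all assumptions made for illustration.

```python
import math

# Hypothetical prices in USD per million tokens. Only the ~10x ratio
# comes from the article; the absolute values are placeholders.
PREMIUM_PRICE_PER_M = 10.0
BUDGET_PRICE_PER_M = 1.0


def calls_for_budget(budget_usd: float, price_per_million: float, tokens_per_call: int) -> int:
    """Number of whole API calls a budget covers at a given token price."""
    return math.floor(budget_usd * 1_000_000 / (price_per_million * tokens_per_call))


budget = 100          # USD, assumed
tokens_per_call = 2000  # assumed average size of one agent step

premium_calls = calls_for_budget(budget, PREMIUM_PRICE_PER_M, tokens_per_call)
budget_calls = calls_for_budget(budget, BUDGET_PRICE_PER_M, tokens_per_call)

print(f"Premium model: {premium_calls} calls")
print(f"Budget model:  {budget_calls} calls")
print(f"Ratio: {budget_calls / premium_calls:.0f}x")
```

Swapping in real per-token prices and a measured tokens-per-call figure from your own workload gives a more realistic estimate, since agentic loops often vary widely in prompt size.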

If you are focused on software engineering specifically, check our LMSYS Coding Arena Leaderboard 2026 to see how this translates to IDE performance.

Where GPT-5.4 Still Reigns Supreme

Despite the hype, OpenAI has not lost the war. The DeepSeek vs GPT-5.4 Arena Battle reveals clear winners in creative and multimodal categories.

- Creative Writing: GPT-5.4 is significantly better at nuance, tone adherence, and avoiding the "robotic" feel of cheaper models.

- Multimodal Integration: GPT-5.4 natively handles images and audio with a fluidity that DeepSeek (primarily a text expert) cannot match yet.

- Safety & Compliance: For Fortune 500 companies, GPT-5.4’s rigorous safety tuning makes it the only viable option for customer-facing chatbots.

The "Local" Advantage

DeepSeek’s biggest weapon isn't just its current Elo score; it’s ownership. Unlike GPT-5.4, which requires sending data to OpenAI’s servers, open-weights models can be distilled and hosted on-premises.

This capability is critical for sectors like finance and healthcare where data privacy is paramount. If you are comparing this to other proprietary challengers on the current Arena leaderboard, consider reading our analysis on the Grok 4.20 LMSYS Arena Ranking.
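As a rough illustration of the on-premises setup described above, the sketch below builds a chat request aimed at a server running on the same machine. It assumes a self-hosted inference server that accepts OpenAI-style chat requests; the URL, port, and model name `deepseek-local` are hypothetical, and the helper names are our own. Nothing is sent until `send` is called.

```python
import json
import urllib.request

# Assumptions for illustration: a self-hosted server on this machine that
# accepts OpenAI-style chat requests, and a hypothetical model name.
LOCAL_ENDPOINT = "http://localhost:8000/v1/chat/completions"
LOCAL_MODEL = "deepseek-local"


def build_chat_request(prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat request aimed at the local server."""
    payload = {
        "model": LOCAL_MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        LOCAL_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the first reply; needs a running server."""
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


request = build_chat_request("Summarize this contract clause.")
print(request.full_url)  # the request targets localhost, not a third-party API
```

With a compatible server running, `send(request)` would return the model's reply. The point of the design is that the prompt and the response both stay inside your own network.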

Conclusion

The DeepSeek vs GPT-5.4 Arena Battle ends with a split verdict for April 2026.

If you need the absolute best creative output and multimodal features, GPT-5.4 is still the king.

However, for developers needing raw logic, math, and code generation on a budget, DeepSeek has rendered the premium price of proprietary models difficult to justify.

Frequently Asked Questions (FAQ)

For pure coding and math efficiency per dollar, DeepSeek V3 is highly competitive and often preferred. For creative writing, strict prompt adherence, safety guardrails, and multimodal tasks, GPT-5.4 remains superior.

Based on the April 2026 dataset, DeepSeek V3/R1 models consistently hold strong in the top tiers, competing fiercely in reasoning capabilities against heavyweights like Grok 4.20 and Claude 4.6 Sonnet.

DeepSeek V3 is drastically cheaper, often costing 90% less per million tokens compared to GPT-5.4’s high-tier pricing, making it the top choice for intensive agentic loops.

While GPT-5.4 and Claude 4.6 generally maintain a lead in the overall category, the gap narrows significantly in the Coding and Hard Prompts sub-categories.

DeepSeek V3 requires more manual guardrailing. Unlike GPT-5.4, which comes rigorously pre-sanitized and aligned, DeepSeek V3 is raw and may generate unrestricted content if not properly managed.