LMSYS Coding Arena Leaderboard April 2026: The Best AI for Software Engineers

Quick Summary: Key Takeaways

- Chat Elo ≠ Coding Elo: A model can be top-tier in general conversation but fail at complex architecture logic. Always check the specific Coding category.

- The Claude Sweep: As of April 6, 2026, the Claude 4.6 family (Opus Thinking, Opus, and Sonnet) holds all three top spots for coding.

- Apex Performance: Claude Opus 4.6 Thinking leads with a record 1546 Elo score, setting a new benchmark for multi-file refactoring and one-shot syntax accuracy.

- The Competition: GPT-5.4 High and Gemini 3.1 Pro Preview remain elite contenders, but currently trail the Anthropic stack in technical software engineering tasks.

- Hallucination Risk: A high general ranking can hide a tendency to invent library imports. The "Hard" coding prompts reveal which models maintain strict syntax adherence.

Why General Rankings Fail Developers

If you are choosing your coding assistant based on the general leaderboard, you are likely losing productivity. The LMSYS Coding Arena Leaderboard April 2026 tells a completely different story than the main chat rankings.

This deep dive is part of our extensive guide on LMSYS Chatbot Arena Current Rankings.

While models like Gemini 3.1 Pro excel in massive document retrieval, software engineering requires "zero-shot" logic and strict syntax adherence. These are the areas where Anthropic's Claude 4.6 family has currently established a decisive lead.

The Current Top Tier for Code (April 2026)

The April 2026 coding hierarchy has consolidated around reasoning efficiency and test-time compute.

1. Claude Opus 4.6 (Thinking & Standard): The undisputed king for developers. It offers record-breaking performance in C++, Rust, and Python. Its "Thinking" variant uses internal verification to catch syntax errors before outputting, making it the favorite for complex enterprise architecture.

2. Claude Sonnet 4.6: The speed champion. It maintains a 1521 Elo, offering near-Opus performance at a significantly higher throughput, ideal for rapid prototyping.

3. GPT-5.4 High: OpenAI's latest entry remains a top-tier generalist with a 1457 coding Elo. While slightly behind the Claude 4.6 family in pure logic, its ecosystem integration remains unmatched.
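To put the rating gaps above in perspective, a difference in Elo can be converted into an expected head-to-head win rate. The sketch below assumes the conventional Elo logistic curve (base 10, scale 400); this article does not describe how the leaderboard actually fits its scores, so treat the result as a rough reading rather than an official figure. The function name `expected_win_rate` is chosen here for illustration.

```python
def expected_win_rate(rating_a: float, rating_b: float) -> float:
    """Expected share of votes won by model A against model B.

    Assumption: the standard Elo logistic curve (base 10, scale 400).
    The leaderboard's own fitting procedure may differ.
    """
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


# Coding Elo values quoted in this article.
OPUS_THINKING = 1546
SONNET = 1521
GPT_HIGH = 1457

opus_vs_gpt = expected_win_rate(OPUS_THINKING, GPT_HIGH)
opus_vs_sonnet = expected_win_rate(OPUS_THINKING, SONNET)

print(f"Opus Thinking vs GPT-5.4 High: {opus_vs_gpt:.2f}")
print(f"Opus Thinking vs Sonnet 4.6:   {opus_vs_sonnet:.2f}")
```

Under that assumption, the 89-point gap to GPT-5.4 High works out to roughly a 0.63 expected win rate, while the 25-point gap to Sonnet 4.6 is roughly 0.54. A lead on the leaderboard therefore means "preferred more often", not "always better".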

Coding Arena vs. Chat Arena: The Critical Difference

In the standard arena, users vote on "vibes" and formatting. In the coding arena, the vote is increasingly binary: Did the code work on the first try, and was the logic sound?

- General Leaderboard: Rewards tone, length, and conversational flow.

- Coding Leaderboard: Rewards brevity, functional correctness, and the absence of hallucinated dependencies.

For example, while Grok 4.20 Beta1 is surging in general popularity due to its real-time data access, the coding rankings show that Anthropic’s ruthless focus on architectural planning is what developers actually prefer. You can read more on the general trends in our Grok 4.20 LMSYS Arena Ranking update.

Accuracy & Hallucinations in April 2026

The metric developers can least afford to ignore is the hallucination rate. On the LMSYS Coding Arena Leaderboard April 2026, we see a clear separation between models that "guess" and models that "reason."

Top-tier models like Claude Opus 4.6 Thinking (1546 Elo) will "refuse" a task or correct themselves if a library doesn't exist, whereas mid-tier generalists will often invent packages or parameters. This difference is critical when managing large-scale migrations or refactoring legacy codebases.
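Whichever model you use, hallucinated dependencies are cheap to catch before the code runs. As a minimal sketch, assuming the generated code is Python and that "hallucinated" simply means "does not resolve in the current environment", the helper below parses the source and reports top-level imports that cannot be found. The name `unresolved_imports` is hypothetical, and a package that is real but not installed will also be flagged.

```python
import ast
import importlib.util


def unresolved_imports(source: str) -> list[str]:
    """Return top-level module names imported by `source` that cannot be
    located in the current environment (sorted, without duplicates)."""
    tree = ast.parse(source)
    modules: set[str] = set()

    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            # `import a.b.c` -> check the top-level package `a`
            for alias in node.names:
                modules.add(alias.name.split(".")[0])
        elif isinstance(node, ast.ImportFrom):
            # Skip relative imports (`from . import x`); they depend on
            # the package layout, not on installed distributions.
            if node.level == 0 and node.module:
                modules.add(node.module.split(".")[0])

    return sorted(m for m in modules if importlib.util.find_spec(m) is None)


generated = """
import json
from os import path
import totally_made_up_pkg_xyz
"""

print(unresolved_imports(generated))
```

This only checks that a module exists. It does not catch invented functions or parameters on a real library, which still need tests or a type checker.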

Conclusion

Don't let general marketing hype dictate your engineering stack. The LMSYS Coding Arena Leaderboard April 2026 confirms that specialized reasoning models are pulling away from general-purpose assistants.

For pure coding efficiency, focus on the tools that have mastered technical logic: the Claude 4.6 family and the latest high-reasoning versions of GPT and Gemini.

Frequently Asked Questions (FAQ)

As of April 2026, the Claude 4.6 family occupies the top tier for reliability and syntax accuracy, with Claude Opus 4.6 Thinking leading the specialized coding rankings.

Coding Elo scores are separate from general Elo scores. Top coding models in April 2026 have pushed the ceiling above 1540 Elo in the specific "Category: Coding" tab.

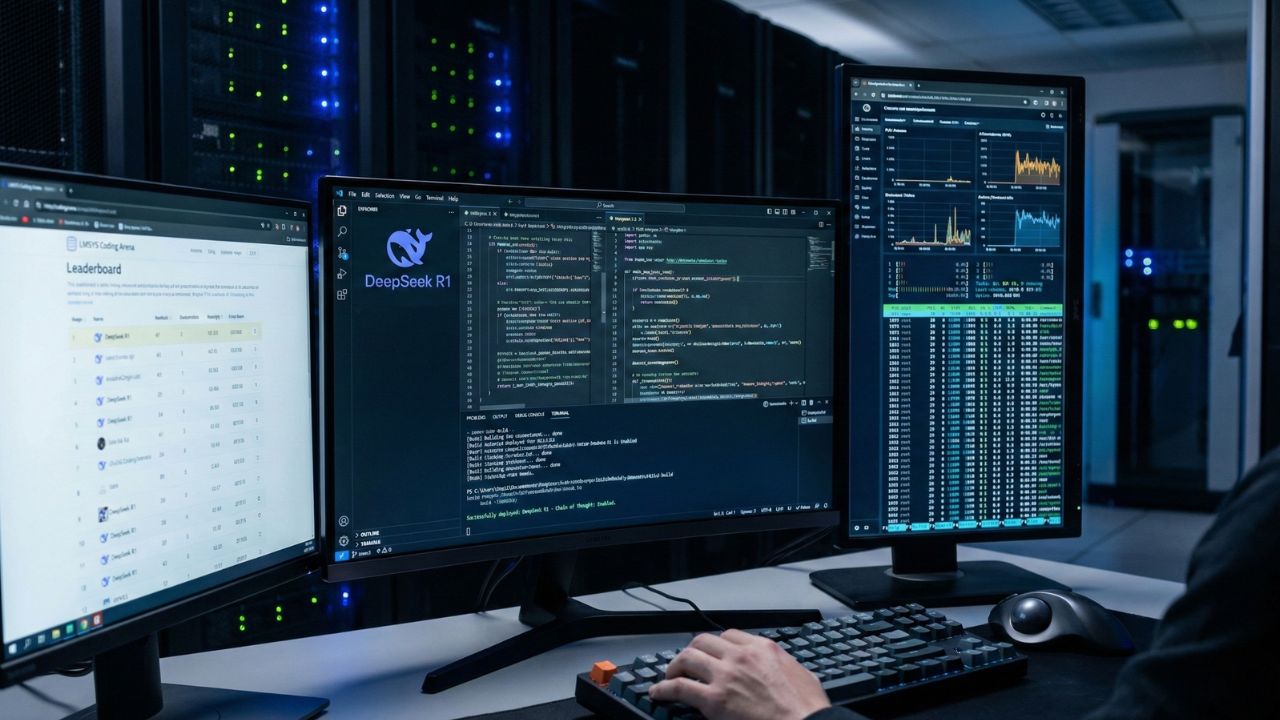

For pure script generation and local deployment, DeepSeek R1 remains a strong choice. However, for large-scale refactoring and multi-file architecture, Claude Opus 4.6 Thinking currently holds a significant lead.

The Coding Arena uses strict technical prompts (e.g., system design, debugging complex syntax) and users vote based on functional accuracy and one-shot success, not conversational tone.

Yes, the rankings are credible, because votes are blind and crowdsourced. Users do not know which model generated the code until after they vote, which reduces brand bias and reflects real-world engineering utility.
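To make the mechanics concrete, here is a minimal sketch of how a stream of blind pairwise votes can be turned into ratings. It uses the classic online Elo update with an assumed K-factor and starting rating; the leaderboard's real aggregation method is not described in this article and may differ. The model names, `apply_votes`, and the vote format are all hypothetical.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Standard Elo expectation for A against B (base 10, scale 400)."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def apply_votes(votes, k: float = 4.0, initial: float = 1000.0) -> dict:
    """Fold (model_a, model_b, winner) votes into a ratings table.

    `winner` is "a", "b", or "tie". `k` and `initial` are illustrative
    assumptions, not values taken from the leaderboard.
    """
    outcome = {"a": 1.0, "b": 0.0, "tie": 0.5}
    ratings: dict[str, float] = {}

    for model_a, model_b, winner in votes:
        r_a = ratings.setdefault(model_a, initial)
        r_b = ratings.setdefault(model_b, initial)
        # Move each rating toward the observed result; the total is conserved.
        delta = k * (outcome[winner] - expected_score(r_a, r_b))
        ratings[model_a] = r_a + delta
        ratings[model_b] = r_b - delta

    return ratings


# Hypothetical votes: the voter sees only "A" and "B", never the model names.
votes = [
    ("model_x", "model_y", "a"),
    ("model_y", "model_x", "tie"),
    ("model_x", "model_z", "b"),
]
for name, rating in sorted(apply_votes(votes).items()):
    print(f"{name}: {rating:.1f}")
```

Because the voter never sees the model names, the only signal entering the update is which answer was preferred.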