Why the OpenAI-Astral Deal Is a Trap

The industry is cheering OpenAI’s acquisition of Astral as a massive win for open-source developer productivity, but CTOs need to look closer at the math. This buyout is a masterclass in enterprise vendor lock-in.

By owning the foundational tools that make Python development fast and wiring them natively into Codex, OpenAI is positioning its ecosystem as the mandatory operating system for AI agents. As autonomous multi-agent loops scale up, this seemingly helpful integration will quietly trap enterprises in a cycle of rising API token costs and infrastructure dependency.

Quick Facts

- The Astral buyout brings the creators of uv, Ruff, and ty into OpenAI to power Codex.

- Agentic workflow loops built on Codex will run auto-formatting and error correction inside OpenAI's proprietary AI ecosystem.

- Enterprise API budgets face a severe threat as continuous self-healing code cycles consume massive amounts of tokens.

- Open-source alternatives remain available, but the seamless integration with Codex creates an incredibly sticky vendor lock-in.

The integration of Astral’s Rust-based utilities into OpenAI gives the AI giant native control over some of the most widely used tools in modern Python engineering.

By Astral’s own benchmarks, uv resolves and installs packages up to 100 times faster than pip, while Ruff lints a typical file in milliseconds.

By absorbing these tools, OpenAI transforms Codex from a passive auto-complete feature into an active, self-correcting operating system for AI software development.

CTOs and technical leads are currently celebrating the death of manual Python debugging.

The reality is far more dangerous for long-term IT budgets.

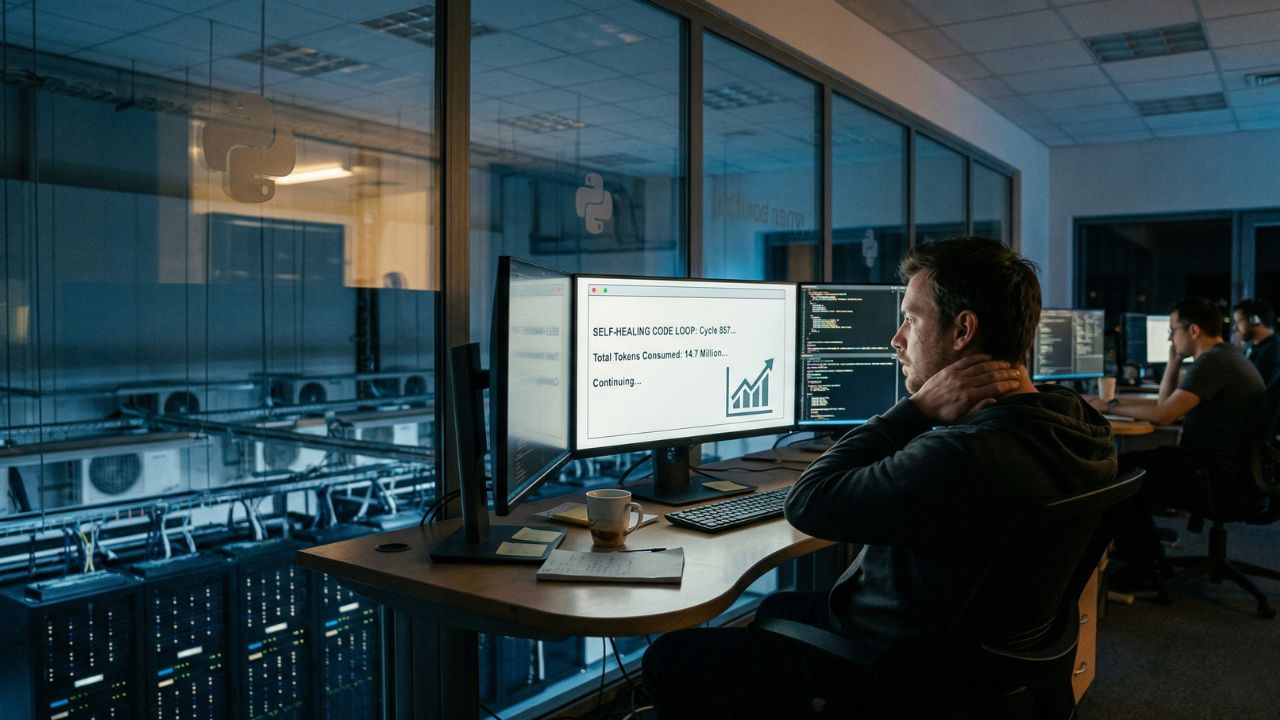

When an AI agent can write code, pull dependencies via uv, and fix its own syntax errors using Ruff, it creates a continuous, autonomous execution loop.

The Token Consumption Engine

Every time an autonomous agent tests a script, fails, and re-prompts itself to fix a linting error, it burns API tokens.

OpenAI is essentially taking the friction out of agentic Python coding workflows.

This means token usage can climb steeply, since each retry typically resends a growing context, and companies may not notice until the invoice arrives.

You are trading manual human labor for automated compute consumption.
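The loop described above can be put into rough numbers. The sketch below is a back-of-the-envelope model, not OpenAI's billing logic: it assumes each retry resends the full conversation so far, and every figure in the hypothetical `LoopAssumptions` class is a placeholder chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LoopAssumptions:
    """Hypothetical figures for illustration only; not real pricing or usage data."""
    base_prompt_tokens: int = 2_000          # task description and code sent on attempt 1
    output_tokens_per_attempt: int = 500     # code or patch the model returns each attempt
    feedback_tokens_per_attempt: int = 300   # linter or test output appended after a failure


def tokens_for_task(attempts: int, a: LoopAssumptions = LoopAssumptions()) -> int:
    """Total tokens consumed by one task that needs `attempts` model calls.

    Assumes every retry resends the whole conversation so far, so the
    input grows with each failed attempt.
    """
    total = 0
    context = a.base_prompt_tokens
    for _ in range(attempts):
        total += context + a.output_tokens_per_attempt
        # The next call carries the previous output plus the failure report.
        context += a.output_tokens_per_attempt + a.feedback_tokens_per_attempt
    return total
```

Under these placeholder figures, a task solved on the first attempt costs 2,500 tokens, while one that takes five attempts costs 20,500, roughly eight times as much for the same shipped result.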

"Developer lock-in through infrastructure control is the oldest play in tech. By owning the fastest Python tools on the market, OpenAI ensures that any enterprise running agentic AI will eventually have to do it on their platform to remain competitive."

While automating tasks once sent to offshore developers looks like a major cost saving upfront, it redirects much of that saved capital into OpenAI's recurring revenue stream.

Technology leaders need to build a resilient Enterprise AI Strategy Framework 2026 to prevent this exact scenario.

Relying entirely on a single vendor for both the intelligence (Codex) and the execution infrastructure (Astral) destroys negotiating leverage.

Why It Matters

The Astral acquisition is a brilliant moat-building strategy by OpenAI.

By controlling the foundational infrastructure that makes Python work at scale, they are trapping enterprise teams in their ecosystem.

Competitors like Anthropic will now struggle to offer the same level of frictionless, zero-configuration autonomous coding.

Companies must immediately audit their AI agent workflows and enforce strict token spending limits before they become permanently locked into OpenAI's infrastructure.

Frequently Asked Questions

1. Why is OpenAI acquiring open-source Python tools like Astral?

OpenAI acquired Astral to integrate hyper-fast Python tools like uv and Ruff directly into Codex. This allows their AI agents to autonomously manage dependencies and fix syntax errors without human intervention, creating a smoother but highly controlled developer ecosystem.

2. What are the enterprise API cost implications of autonomous Codex agents?

Autonomous agents work in continuous loops: writing code, testing it, and fixing errors. Each step in this self-healing process consumes API tokens. Because agents run these loops constantly, enterprise API costs can grow far faster than under standard prompt-response usage.

3. Will OpenAI charge for Astral's uv and Ruff tools in the future?

While OpenAI and Astral leadership have committed to keeping uv, Ruff, and ty open-source and permissively licensed, the deeper, seamless integration of these tools into the Codex AI agent platform will likely be monetized through API usage or enterprise subscriptions.

4. How does the Astral acquisition create vendor lock-in for Python pipelines?

By owning the most popular dependency management and linting tools, OpenAI can optimize Codex to work flawlessly with them. This creates a highly efficient workflow that is incredibly difficult for engineering teams to leave, trapping them in the OpenAI ecosystem.

5. What is the ROI of switching to OpenAI Codex for enterprise software development?

The immediate ROI is high due to the elimination of manual environment setup and boilerplate coding tasks. However, long-term ROI heavily depends on how well an organization can manage the hidden API costs of continuous agentic operations.

6. How do you calculate total cost of ownership (TCO) for AI agent swarms?

TCO must include not just developer seat licenses, but the raw API token consumption required for agents to iteratively test, debug, and format code autonomously over thousands of daily automated cycles.
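As a rough illustration of that calculation, the sketch below adds seat licenses to metered token spend. The function name `monthly_tco`, its parameters, and the 22-working-day default are assumptions made for this example; real contracts may price input and output tokens differently.

```python
def monthly_tco(
    seats: int,
    seat_price_usd: float,
    cycles_per_day: int,
    tokens_per_cycle: int,
    usd_per_million_tokens: float,
    working_days: int = 22,
) -> dict[str, float]:
    """Estimate monthly cost as seat licenses plus metered API tokens.

    All inputs are caller-supplied estimates; nothing here reflects
    a real price list.
    """
    licenses = seats * seat_price_usd
    tokens = cycles_per_day * tokens_per_cycle * working_days
    api = tokens / 1_000_000 * usd_per_million_tokens
    return {"licenses_usd": licenses, "api_usd": api, "total_usd": licenses + api}
```

With 50 seats at $30, 5,000 automated cycles a day at 10,000 tokens each, and $5 per million tokens (all invented numbers), the API line comes to $5,500 a month against $1,500 in licenses.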

7. Are there open-source alternatives to OpenAI Codex for Python development?

Yes. Since Astral’s tools remain open-source, developers can use alternative open-weight models like Llama 3 or Qwen alongside independent agent frameworks, though these setups may lack the instant, out-of-the-box integration OpenAI is building.
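One way to keep that option open is to write the agent loop against an interface rather than a vendor. The sketch below is a minimal illustration under stated assumptions: `CodeModel`, `EchoModel`, and `fix_until_clean` are hypothetical names, and `check` stands in for whatever linter or test runner a team already uses.

```python
from typing import Callable, Protocol


class CodeModel(Protocol):
    """Anything that can turn a prompt into code (hypothetical interface)."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in backend for local testing; a real one would call a hosted or local model."""

    def complete(self, prompt: str) -> str:
        return f"# TODO: {prompt}"


def fix_until_clean(
    model: CodeModel,
    task: str,
    check: Callable[[str], list[str]],
    max_attempts: int = 3,
) -> tuple[str, int]:
    """Ask `model` for code, re-prompting with `check` findings until clean or capped.

    Returns the last code produced and the number of model calls made.
    """
    prompt = task
    code = ""
    for attempt in range(1, max_attempts + 1):
        code = model.complete(prompt)
        problems = check(code)
        if not problems:
            return code, attempt
        # Feed the findings back so the next attempt can address them.
        prompt = task + "\nFix these issues:\n" + "\n".join(problems)
    return code, max_attempts
```

Because the loop only depends on `complete`, swapping the model behind it is a one-line change, which preserves some negotiating leverage even if the tooling around it stays the same.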

8. How will the Astral deal affect enterprise AI governance and security?

It centralizes the software supply chain. Relying on a single vendor for both code generation and package management requires strict oversight to ensure proprietary data and internal codebases are not compromised or used for unauthorized model training.

9. What does the Model Context Protocol mean for Astral tools?

The Model Context Protocol is an open standard that lets AI models interface directly with data sources and local environments. If Astral's tools are exposed through this protocol, they could give Codex the context it needs to resolve dependencies without user prompting.

10. How can CTOs prevent API token bloat when using autonomous AI coders?

CTOs must implement hard token limits, shift less complex tasks to smaller or cheaper models, and use outcome-based analytics to ensure autonomous agents are actually shipping functional features rather than just looping endlessly over minor syntax errors.
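A hard limit and a routing rule can both be very small pieces of code. The sketch below assumes token counts are reported by whatever client a team uses; `TokenBudget`, `TokenBudgetExceeded`, `pick_model`, and the model labels are hypothetical placeholders, not part of any vendor SDK.

```python
class TokenBudgetExceeded(RuntimeError):
    """Raised when an agent run would pass its hard token cap."""


class TokenBudget:
    """Tracks token usage for one agent run against a fixed cap."""

    def __init__(self, limit: int) -> None:
        if limit <= 0:
            raise ValueError("limit must be positive")
        self.limit = limit
        self.used = 0

    def charge(self, tokens: int) -> None:
        """Record usage; refuse the charge if it would exceed the cap."""
        if self.used + tokens > self.limit:
            raise TokenBudgetExceeded(
                f"{self.used + tokens} tokens requested, cap is {self.limit}"
            )
        self.used += tokens


def pick_model(task_kind: str) -> str:
    """Route mechanical fixes to a cheaper tier. Labels are placeholders."""
    cheap_tasks = {"lint_fix", "format", "rename"}
    return "small-model" if task_kind in cheap_tasks else "large-model"
```

Calling `charge` before each model request means a runaway loop stops with an exception instead of an invoice, and the refused request is never counted against the budget.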