The Hidden Latency Trap in OpenAI’s Safeguard

OpenAI just handed developers a massive open-source victory for teen safety, but engineering teams are already hitting a catastrophic wall.

Putting a 20-billion-parameter reasoning model in your primary request loop adds noticeable application lag, and it is pushing teams to rethink their architecture.

Quick Facts

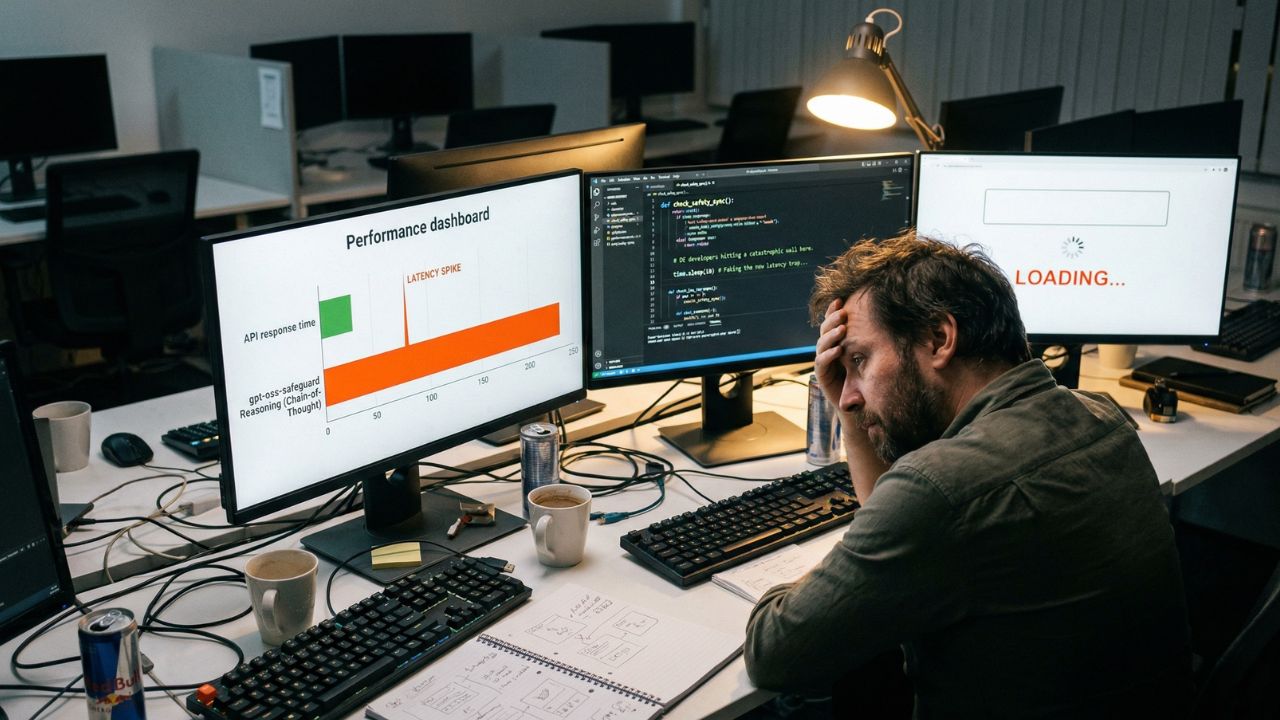

- The latency spike: OpenAI’s new gpt-oss-safeguard uses chain-of-thought reasoning, making it significantly slower than traditional classification filters.

- The architectural bloat: Running a secondary 20B or 120B open-weight model for mandatory safety checks is breaking sequential agentic pipelines.

- The required pivot: Developers must immediately shift to asynchronous moderation to prevent the safeguard from destroying their application's user experience.

The Price of Perfect Safety

The headlines are celebrating OpenAI's push to standardize teen safety across the ecosystem.

By open-sourcing prompt-based policies to run on their gpt-oss-safeguard model, they are attempting to lock down vulnerabilities following severe legal pressure over user deaths.

But software engineers are discovering a brutal reality underneath the PR victory.

The new safeguard is not a lightweight regex filter or a basic API endpoint.

It is a full-scale reasoning engine that evaluates complex safety policies in real time.

When developers bolt this model directly onto their application's main thread, the lag becomes instantly noticeable.

Waiting for a massive 20B or 120B model to complete a chain-of-thought analysis before generating a user response completely fractures the flow of real-time interaction.

Breaking the Sequential Loop

The standard method of waiting for safety approval before passing a prompt to the main generation model no longer holds up for real-time products.

Teams trying to brute-force this sequential method are watching their circuit breakers trip and their user retention plummet.

"gpt-oss-safeguard can be time and compute-intensive, which makes it challenging to scale across all platform content." — OpenAI Technical Report

The only viable path forward involves an asynchronous architectural pivot.

Engineering leaders are now forced to decouple their safety checks, letting the primary application run while the moderation model evaluates the context in the background.

This exact transition is making secure software development with generative AI incredibly difficult for startups without dedicated infrastructure teams.

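You have to cache verdicts aggressively so repeated inputs skip the model. You must deploy semantic routing so that only ambiguous or higher-risk content reaches the reasoning model at all.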
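The routing idea can be sketched in a few lines of Python. This is a minimal illustration, not a production router: `RISK_TERMS`, `cheap_risk_score`, and `route` are hypothetical names, and the keyword match is a crude stand-in for the small classifier or embedding lookup a real semantic router would use.

```python
from typing import Callable

# Hypothetical trigger terms; a real router would use a small classifier or embeddings.
RISK_TERMS = ("self-harm", "weapon", "overdose")


def cheap_risk_score(text: str) -> float:
    """Fraction of trigger terms present; a crude stand-in for a fast classifier."""
    lowered = text.lower()
    hits = sum(term in lowered for term in RISK_TERMS)
    return hits / len(RISK_TERMS)


def route(text: str, heavy_check: Callable[[str], bool], threshold: float = 0.0) -> bool:
    """Return True if text is allowed. Only text scoring above threshold is escalated."""
    if cheap_risk_score(text) <= threshold:
        return True  # low risk: skip the reasoning model entirely
    return heavy_check(text)  # higher risk: pay for the slow, policy-aware check
```

Here `heavy_check` is whatever function wraps your safeguard call. The design choice is that the expensive model only sees traffic the cheap pass could not clear, which is where most of the latency savings would come from.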

The Ripple Effect on Infrastructure

If you ignore the lag, you lose your users. If you try to optimize it manually, you burn engineering hours.

We are already seeing the impact of open-source AI moderation tools on Indian GCCs (global capability centers), as offshore hubs struggle to integrate these massive models into legacy enterprise stacks.

The burden has shifted entirely onto the developer. Writing code is no longer just about feature delivery.

It is about orchestrating an incredibly heavy multi-model pipeline without letting the user see the seams.

You also have to account for the compute costs of local AI moderation models, which scale aggressively the moment you try to reduce the latency by throwing more hardware at the problem.

Why It Matters

The era of the simple API call is over. OpenAI's safety mandate proves that future applications will require a swarm of specialized, heavy models operating in tandem.

Developers who refuse to adopt asynchronous moderation architectures will simply be priced out of the market by their own compute bills and abandoned by users frustrated by loading screens.

The winners will be the engineers who can make a 20-billion-parameter background check feel invisible.

Frequently Asked Questions

1. Does the OpenAI OSS safeguard increase API response times?

Yes. The gpt-oss-safeguard model reasons through the policy with chain-of-thought before it answers, rather than emitting a label in a single classification pass. That is inherently slower than a traditional fast classifier, and the extra token generation adds latency to every request that waits on it.

2. How do you architect asynchronous AI moderation pipelines?

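You must decouple the safety check from the main user-facing generation thread using message queues. This allows the primary response to generate seamlessly while the safeguard analyzes the input or output asynchronously in the background. The trade-off is that a response can reach the user before its verdict arrives, so you need a way to retract or flag content after the fact.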
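A minimal sketch of that queue-based decoupling, using only Python's standard `asyncio`. Everything named here is an assumption for illustration: `run_safeguard` is a hypothetical stand-in for the real model call, and an in-process `asyncio.Queue` stands in for whatever message broker you would actually deploy.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class ModerationJob:
    message_id: str
    text: str


async def run_safeguard(text: str) -> bool:
    """Hypothetical stand-in for a gpt-oss-safeguard call; True means 'violates policy'."""
    await asyncio.sleep(0.05)  # simulates slow chain-of-thought inference
    return "forbidden" in text.lower()


async def moderation_worker(queue: asyncio.Queue, flagged: list[str]) -> None:
    """Drain the queue in the background and record violating message IDs."""
    while True:
        job = await queue.get()
        try:
            if await run_safeguard(job.text):
                flagged.append(job.message_id)  # a real system would retract or escalate here
        finally:
            queue.task_done()


async def handle_request(queue: asyncio.Queue, message_id: str, text: str) -> str:
    """Enqueue the safety check and answer immediately, without awaiting the verdict."""
    queue.put_nowait(ModerationJob(message_id, text))
    return f"reply to {message_id}"  # placeholder for the main generation model


async def demo() -> tuple[list[str], list[str]]:
    queue: asyncio.Queue = asyncio.Queue()
    flagged: list[str] = []
    worker = asyncio.create_task(moderation_worker(queue, flagged))
    replies = [
        await handle_request(queue, "m1", "hello there"),
        await handle_request(queue, "m2", "some forbidden request"),
    ]
    await queue.join()  # only the demo waits; request handlers never do
    worker.cancel()
    return replies, flagged
```

Running `asyncio.run(demo())` returns both replies plus the list of flagged message IDs. Note that `handle_request` returns before any verdict exists, which is the point of the pattern and also its main risk.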

3. What is the token-processing delay of local moderation models?

Depending on your hardware stack and the specific reasoning effort setting, local 20B or 120B open-weight models can add anywhere from 500 milliseconds to several full seconds of processing delay per interaction.

4. How do you prevent circuit breaker trips when using dual-LLM checks?

Implement strict fallback caching, hard timeouts on the moderation model, and semantic routing. This ensures the primary application does not crash or hang indefinitely if the safety layer experiences a processing bottleneck.
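The hard-timeout part of that advice might look like the sketch below. It assumes a hypothetical `slow_safeguard` coroutine in place of the real model call and uses the standard library's `asyncio.wait_for`; the `fail_open` flag is an illustrative name for the policy decision you have to make when no verdict arrives in time.

```python
import asyncio
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"
    BLOCK = "block"
    UNKNOWN = "unknown"  # the safeguard did not answer in time


async def slow_safeguard(text: str, delay_s: float) -> Verdict:
    """Hypothetical stand-in for the moderation model; delay_s simulates inference time."""
    await asyncio.sleep(delay_s)
    return Verdict.BLOCK if "forbidden" in text.lower() else Verdict.ALLOW


async def check_with_timeout(text: str, delay_s: float, timeout_s: float = 0.1) -> Verdict:
    """Return the safeguard's verdict, or UNKNOWN if it exceeds the hard timeout."""
    try:
        return await asyncio.wait_for(slow_safeguard(text, delay_s), timeout=timeout_s)
    except asyncio.TimeoutError:
        return Verdict.UNKNOWN


def resolve(verdict: Verdict, fail_open: bool) -> bool:
    """Map a verdict to 'may we respond?'. fail_open decides what UNKNOWN means."""
    if verdict is Verdict.UNKNOWN:
        return fail_open
    return verdict is Verdict.ALLOW
```

Whether to fail open or fail closed on a timeout is a product and risk decision rather than a technical one. For teen-safety policies, failing closed on the riskiest surfaces is the more conservative choice.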

5. Can I run OpenAI's safeguard on edge devices?

The gpt-oss-safeguard-20b model requires roughly 16 GB of VRAM. This makes it technically feasible for high-end edge devices, but the resulting latency is often far too high for practical real-world mobile applications.

6. How does prompt injection bypass standard open-source moderation?

Attackers embed hidden instructions or structural manipulation within benign-looking text. This tricks the safeguard model's reasoning layer into classifying malicious payloads as fully compliant with the provided safety policy.

7. What are the best practices for caching moderated AI responses?

Use semantic caching to store the safety verdicts of frequent or highly similar queries. This allows you to bypass the heavy moderation model for identical or near-identical user inputs, saving substantial compute and time. Invalidate the cache whenever the safety policy changes, since old verdicts reflect the old policy.
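As a starting point, here is a sketch of a verdict cache. To stay dependency-free it swaps true semantic caching for a simpler technique: exact matching on normalized text, with a time-to-live. `VerdictCache` and `moderate` are hypothetical names, and `safeguard` is any callable that returns `True` for a violation.

```python
import hashlib
import time
from typing import Callable, Optional


class VerdictCache:
    """Exact-match cache keyed on normalized text; a simpler stand-in for semantic caching."""

    def __init__(self, ttl_s: float = 300.0, clock: Callable[[], float] = time.monotonic):
        self._ttl_s = ttl_s
        self._clock = clock
        self._store: dict[str, tuple[float, bool]] = {}

    @staticmethod
    def _key(text: str) -> str:
        normalized = " ".join(text.lower().split())  # collapse case and whitespace
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def get(self, text: str) -> Optional[bool]:
        entry = self._store.get(self._key(text))
        if entry is None:
            return None
        stored_at, verdict = entry
        if self._clock() - stored_at > self._ttl_s:
            return None  # expired: force a fresh check
        return verdict

    def put(self, text: str, is_violation: bool) -> None:
        self._store[self._key(text)] = (self._clock(), is_violation)


def moderate(text: str, cache: VerdictCache, safeguard: Callable[[str], bool]) -> bool:
    """Return True if text violates policy, calling the safeguard only on a cache miss."""
    cached = cache.get(text)
    if cached is not None:
        return cached
    verdict = safeguard(text)
    cache.put(text, verdict)
    return verdict
```

A semantic version would replace `_key` with an embedding lookup and a similarity threshold. That widens the hit rate but also raises the risk of reusing a verdict for text that only looks similar, so the threshold deserves careful testing.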

8. How do you decouple safety checks from the main user interface thread?

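Deploy a background worker or microservice that sends the written safety policy and the content to the safeguard as a prompt. ROOST is the safety-tooling organization associated with the release, not an input format. The service should notify your backend, for example through a webhook, only when a severe policy violation is actually detected, and the backend can then update the UI.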

9. Why is sequential AI generation failing in 2026?

Modern agentic pipelines require multiple models communicating simultaneously. Blocking the main execution thread to wait for a sequential safety approval destroys the real-time feedback loop that users now expect from software.

10. How do you integrate Python middleware for real-time safety filtering?

Deploy a Python-based asynchronous interceptor using FastAPI. This allows you to intercept the incoming request, trigger the safeguard model, and pass the prompt to the generation model simultaneously without locking the thread.
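The concurrency at the heart of that interceptor can be sketched with plain `asyncio`. FastAPI is left out to keep the example dependency-free; in a FastAPI app, a function like `guarded_generate` would be awaited inside an `async def` route handler. `run_safeguard` and `generate` are hypothetical stand-ins for the two model calls.

```python
import asyncio


async def run_safeguard(prompt: str) -> bool:
    """Hypothetical stand-in for the safeguard call; True means the prompt is allowed."""
    await asyncio.sleep(0.05)
    return "forbidden" not in prompt.lower()


async def generate(prompt: str) -> str:
    """Hypothetical stand-in for the main generation model."""
    await asyncio.sleep(0.05)
    return f"answer to: {prompt}"


async def guarded_generate(prompt: str) -> str:
    """Start both calls at once; release the draft only if the safeguard allows it."""
    safety_task = asyncio.create_task(run_safeguard(prompt))
    generation_task = asyncio.create_task(generate(prompt))
    if not await safety_task:
        generation_task.cancel()  # stop paying for a response we will not send
        return "Sorry, I can't help with that."
    return await generation_task
```

Unlike a fire-and-forget queue, this variant still holds the response until the verdict arrives. What it saves is the sum: total wait is roughly the slower of the two calls rather than both added together.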