Deploy a Remote MCP Server on Cloudflare in Under 8 Minutes (May 2026)

- Edge-Native Execution: Transition seamlessly from local stdio to global HTTP/SSE streaming connections.

- Zero-Cost Scaling: Utilize Cloudflare Workers to handle up to 100,000 daily agent requests without infrastructure debt.

- Rapid Provisioning: Master the Wrangler deploy workflow for remote MCP servers to push your code from terminal to global edge in minutes.

- Secure Enterprise Connectivity: Safely bridge edge MCP servers to private VPC databases using Cloudflare Tunnels and D1.

- Built-in Authentication: Leverage native edge middleware to intercept and validate OAuth payloads before agents hit your tools.

Local-only MCP is dead in 2026. If your agentic architecture relies on standard input/output (stdio) running on a local developer machine, you cannot scale to production.

You need to deploy a remote MCP server on Cloudflare Workers with proper OAuth boundaries, and you can do it in about 8 minutes.

The platform's free tier allows up to 100,000 requests per day, which makes it a compelling deployment target for enterprise AI teams.

Before diving into edge computing mechanics and Wrangler configurations, make sure your foundational enterprise architecture is aligned by reviewing our complete 2026 guide to MCP (Model Context Protocol) servers.

Why Local Stdio MCP is a Production Dead End

Running MCP over stdio is fantastic for initial debugging. However, the AI client launches the MCP server as a local subprocess, so both must run on the same host machine.

This architecture immediately fractures when you introduce remote, cloud-hosted agents. Distributed agents require persistent, stateful connections over HTTP and Server-Sent Events (SSE).

If you attempt to retrofit legacy serverless architectures to handle SSE, you will encounter severe timeout issues, because platforms that cap request duration cut off long-lived streams.

Cloudflare Workers natively resolve this by keeping V8 isolates alive for streaming responses without punishing you with idle billing.

The Cloudflare Workers MCP Template

To hit the 8-minute deployment mark, you must avoid writing boilerplate transport logic.

Start with the official Cloudflare Workers MCP template. This template comes pre-configured with the correct mcp.run_http_server bindings.

It automatically maps incoming HTTP POST requests to the MCP JSON-RPC handler.
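To make the POST-to-JSON-RPC mapping concrete, here is a minimal sketch of the dispatch step. It is not the template's actual code: the handlers table, handleRpc, and rpcError are hypothetical names chosen for illustration, and notifications and batching are left out. In a Worker, the request body text would be passed to a function like this from the fetch handler.

```typescript
// Sketch only: dispatch a JSON-RPC 2.0 request body to a method table.
// `handlers`, `handleRpc`, and `rpcError` are illustrative names, not template APIs.
type RpcId = string | number | null;
type JsonRpcResponse =
  | { jsonrpc: "2.0"; id: RpcId; result: unknown }
  | { jsonrpc: "2.0"; id: RpcId; error: { code: number; message: string } };

type Handler = (params: unknown) => unknown;

// "ping" and "tools/list" are MCP method names; the "echo" tool is made up.
const handlers = new Map<string, Handler>([
  ["ping", () => ({})],
  [
    "tools/list",
    () => ({
      tools: [{ name: "echo", description: "Echo text back", inputSchema: { type: "object" } }],
    }),
  ],
]);

function rpcError(id: RpcId, code: number, message: string): JsonRpcResponse {
  return { jsonrpc: "2.0", id, error: { code, message } };
}

function handleRpc(body: string): JsonRpcResponse {
  let parsed: unknown;
  try {
    parsed = JSON.parse(body);
  } catch {
    return rpcError(null, -32700, "Parse error"); // standard JSON-RPC code
  }
  if (typeof parsed !== "object" || parsed === null) {
    return rpcError(null, -32600, "Invalid Request");
  }
  const { id, method, params } = parsed as { id?: unknown; method?: unknown; params?: unknown };
  const replyId: RpcId = typeof id === "string" || typeof id === "number" ? id : null;
  if (typeof method !== "string") {
    return rpcError(replyId, -32600, "Invalid Request");
  }
  const handler = handlers.get(method);
  if (!handler) {
    return rpcError(replyId, -32601, "Method not found");
  }
  return { jsonrpc: "2.0", id: replyId, result: handler(params) };
}
```

Keeping the dispatch logic in a plain function like this makes it easy to unit test without a running Worker.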

More importantly, it manages the SSE lifecycle natively. It prevents the connection from dropping when an agent takes longer than a few seconds to process a complex multi-tool reasoning step.
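For readers curious what "managing the SSE lifecycle" involves on the wire, the sketch below formats SSE frames and defines a heartbeat comment. It assumes nothing about how the template does this internally; formatSseEvent and SSE_KEEPALIVE are hypothetical names. A server would typically write the heartbeat periodically while a slow tool call is still running, so intermediaries do not treat the stream as idle.

```typescript
// Sketch only: build one Server-Sent Events frame.
// `formatSseEvent` and `SSE_KEEPALIVE` are illustrative names.
function formatSseEvent(data: string, event?: string, id?: string): string {
  const lines: string[] = [];
  if (id !== undefined) lines.push(`id: ${id}`);
  if (event !== undefined) lines.push(`event: ${event}`);
  // Each line of the payload needs its own "data:" field.
  for (const line of data.split(/\r?\n/)) {
    lines.push(`data: ${line}`);
  }
  // A blank line terminates the event.
  return lines.join("\n") + "\n\n";
}

// Lines starting with ":" are comments that SSE clients ignore,
// which makes them usable as a heartbeat.
const SSE_KEEPALIVE = ": keep-alive\n\n";
```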

Executing a Remote MCP Wrangler Deploy

Wrangler is Cloudflare's CLI tool, and it is your primary deployment mechanism.

Configure your wrangler.toml file to define your project name. Ensure your compatibility date is set to "2026-01-01" or later to access the newest streaming optimizations.
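A minimal wrangler.toml along these lines might look like the fragment below. The project name and entry-point path are placeholders, and a real MCP template will likely ship with additional bindings that are not shown here.

```toml
# Illustrative values only: "my-remote-mcp" and "src/index.ts" are placeholders.
name = "my-remote-mcp"
main = "src/index.ts"
compatibility_date = "2026-01-01"
```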

Run npx wrangler deploy. Within seconds, your tools, resources, and prompts are live on a globally distributed edge network, accessible via a secure *.workers.dev subdomain.

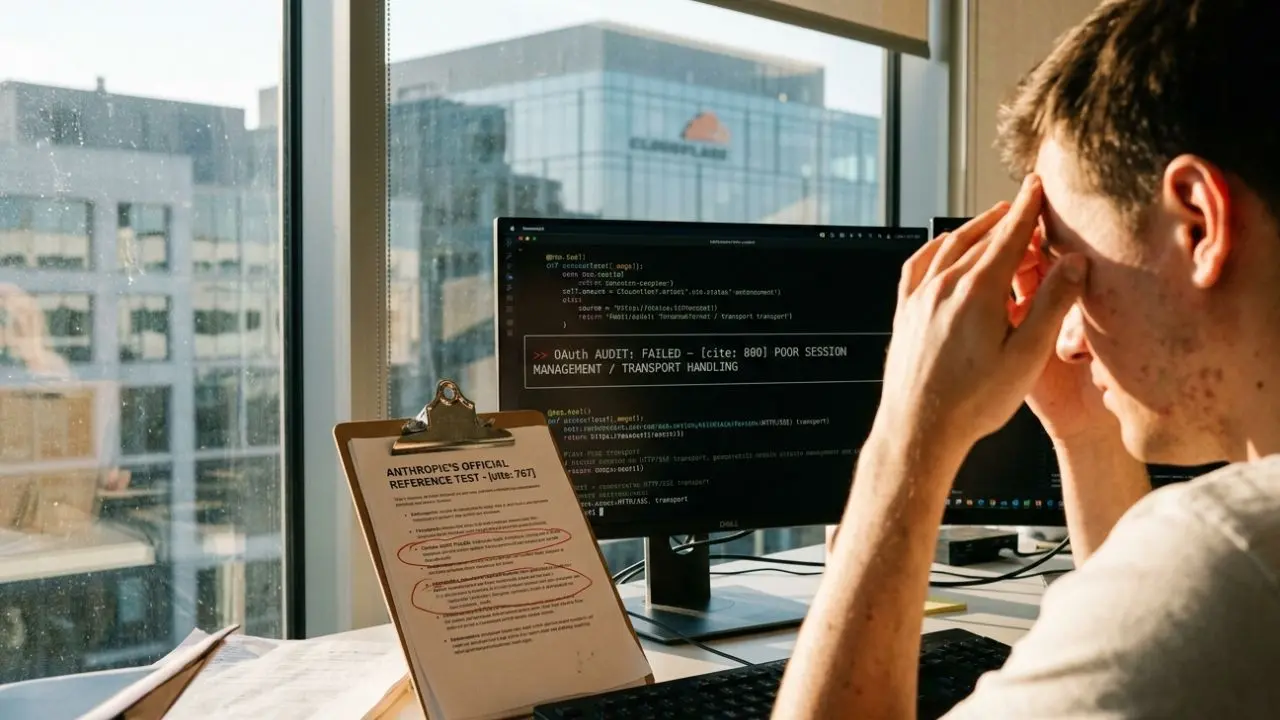

Securing Your Edge: Cloudflare MCP OAuth

Exposing an MCP server to the public internet without strict authentication is a critical vulnerability.

You must enforce OAuth for your Cloudflare MCP server at the edge layer. Use Cloudflare Access or custom Worker middleware to intercept every incoming connection.

Verify the agent's bearer token or mTLS certificate before the request ever reaches the MCP protocol layer.
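As a rough illustration of the custom-middleware route, the sketch below rejects a request before any MCP handling when the bearer token is missing or invalid. The names extractBearerToken and authorize are hypothetical, and the isValid callback stands in for real verification (for example, checking a JWT signature or calling your identity provider), which is deliberately not implemented here.

```typescript
// Sketch only: gate a request on its Authorization header value.
// `extractBearerToken`, `authorize`, and `isValid` are illustrative names.
function extractBearerToken(authorization: string | null): string | null {
  if (!authorization) return null;
  const match = /^Bearer\s+(\S+)$/i.exec(authorization.trim());
  return match?.[1] ?? null;
}

type AuthResult =
  | { ok: true; token: string }
  | { ok: false; status: 401; wwwAuthenticate: string };

function authorize(
  authorization: string | null,
  isValid: (token: string) => boolean, // placeholder for real token verification
): AuthResult {
  const token = extractBearerToken(authorization);
  if (token === null || !isValid(token)) {
    // The caller would turn this into a 401 response with a WWW-Authenticate header.
    return { ok: false, status: 401, wwwAuthenticate: 'Bearer realm="mcp"' };
  }
  return { ok: true, token };
}
```

In a Worker, the header value would come from the incoming request's Authorization header, and only an ok result would be allowed through to the MCP handler.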

For a deep dive into preventing token leakage, consult our comprehensive guide to MCP server authentication and OAuth security.

Connecting to VPC Databases and D1 Storage

A stateless MCP server is rarely useful. Agents need to read and write context.

Cloudflare D1 (serverless SQL) and KV (key-value) integrate directly into the Worker environment. Pass these bindings into your MCP tool handlers.
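The sketch below shows one way a D1 binding could be passed into a tool handler. The binding name DB, the notes table, and the searchNotes function are all assumptions made for illustration. The small interfaces mirror only the prepare, bind, and all calls from the D1 client API so the example stays self-contained; in a real project you would use the types that ship with Cloudflare's tooling.

```typescript
// Sketch only: structural types covering the D1 calls used below.
interface PreparedStatementLike {
  bind(...values: unknown[]): PreparedStatementLike;
  all<T>(): Promise<{ results: T[] }>;
}
interface DatabaseLike {
  prepare(query: string): PreparedStatementLike;
}
// `DB` is an assumed binding name that would be declared in wrangler.toml.
interface Env {
  DB: DatabaseLike;
}

type Note = { id: number; body: string };

// Hypothetical tool handler: the Worker passes `env` in, so the tool
// never needs connection strings or global state.
async function searchNotes(env: Env, term: string): Promise<Note[]> {
  const { results } = await env.DB
    .prepare("SELECT id, body FROM notes WHERE body LIKE ? LIMIT 10")
    .bind(`%${term}%`) // bound parameter, never string-concatenated SQL
    .all<Note>();
  return results;
}
```

Passing env explicitly also makes the handler easy to test with a fake database object.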

If your agent needs to query an on-premise Postgres database, deploy a Cloudflare Tunnel. This securely routes the edge MCP request down into your private VPC without exposing public inbound ports.

MCP Server Hosting Comparison: Vercel vs Cloudflare MCP

When comparing MCP server hosting options, the primary debate is usually Vercel versus Cloudflare.

Vercel is excellent for Next.js-heavy teams and offers robust serverless functions.

However, Cloudflare Workers generally provide lower latency for the specific HTTP/SSE streaming patterns required by the Model Context Protocol.

Furthermore, Cloudflare's pricing model, which charges by CPU time rather than wall-clock time, makes it significantly more cost-effective when agents leave SSE connections open while 'thinking'.
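A quick back-of-the-envelope calculation shows why the metering model matters for streaming. The request profile below is hypothetical (a 30-second SSE stream that uses 20 ms of CPU), and no real prices are assumed; the sketch only compares how many milliseconds each model would count.

```typescript
// Sketch only: compare metered time under two billing models.
// The numbers are hypothetical and do not reflect any provider's price list.
type RequestProfile = { wallClockMs: number; cpuMs: number };

function billableMs(profile: RequestProfile, model: "cpu" | "wall-clock"): number {
  return model === "cpu" ? profile.cpuMs : profile.wallClockMs;
}

// An agent holds the stream open for 30 s while the Worker does 20 ms of actual work.
const sseRequest: RequestProfile = { wallClockMs: 30_000, cpuMs: 20 };

// How many times more metered time the wall-clock model counts for this request.
const meteredRatio = billableMs(sseRequest, "wall-clock") / billableMs(sseRequest, "cpu");
```

For this profile the wall-clock model meters 1,500 times more time than the CPU model, which is the gap the paragraph above describes.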

Frequently Asked Questions (FAQ)

What is the difference between local stdio MCP and a remote MCP server?

Local stdio MCP runs locally, communicating via standard input/output streams, restricting it to single-machine environments. A remote MCP server operates over the internet using HTTP and Server-Sent Events (SSE), enabling distributed, cloud-hosted agents to connect globally.

Why host an MCP server on Cloudflare Workers?

Cloudflare Workers execute code globally at the network edge with near-zero cold starts. Crucially, they bill based on actual CPU time rather than wall-clock time, making long-running HTTP/SSE streaming connections incredibly cost-effective for agentic workloads.

How do I deploy a remote MCP server with Wrangler?

Install the Wrangler CLI, initialize the official Cloudflare MCP template repository, and configure your wrangler.toml file with your project name and compatibility date. Run npx wrangler deploy to push your code directly to the edge network.

Can an edge-hosted MCP server reach private databases?

Yes. You can use Cloudflare Tunnels (cloudflared) to securely connect your edge-hosted MCP server to private, on-premise VPC databases. This allows your remote agents to query internal enterprise data without opening inbound firewall ports.

How much does it cost to run an MCP server on Cloudflare?

Cloudflare's free tier allows 100,000 requests per day, which covers most startup agentic workloads. The Workers Paid plan costs $5/month and provides significantly higher request limits, extended CPU time, and advanced analytics for scaling enterprise operations.

How do I serve my MCP server from a custom domain?

In your Cloudflare dashboard, navigate to your Worker's triggers and add a Custom Domain route. Cloudflare automatically provisions and manages the SSL/TLS certificates, ensuring your MCP HTTP/SSE transport layer is fully encrypted by default.

Can my MCP tools use Cloudflare KV and D1 for storage?

Absolutely. Cloudflare KV is perfect for caching agent prompts and lightweight resource states, while D1 provides a fully managed serverless SQLite database, allowing your MCP tools to execute complex, stateful data operations with low-latency edge access.

How do I monitor and log a remote MCP server?

Cloudflare provides native Logpush and real-time tailing via Wrangler. You can stream these logs directly into Datadog, Axiom, or Cloudflare's native analytics dashboard to monitor JSON-RPC error rates, SSE connection drops, and individual tool execution latency.

Can I move my MCP server to another platform later?

Yes, but transport layers differ. While the core Python or TypeScript MCP SDK logic remains identical, you must adapt the HTTP/SSE entry point wrappers to suit the specific serverless constraints of AWS Lambda or Vercel Edge Functions.

How do I protect my MCP server from runaway agent traffic?

Utilize Cloudflare's native Web Application Firewall (WAF) and Rate Limiting rules. Throttle requests based on the agent's IP or API key to prevent a runaway LLM loop from flooding your MCP server and exhausting your backend resources.
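WAF and Rate Limiting rules are configured on the Cloudflare side rather than in code, so the sketch below is not a replacement for them. It only illustrates the per-key throttling idea with a simple fixed-window counter. FixedWindowLimiter is a hypothetical class, and because it keeps state in memory it would not be shared across Worker instances.

```typescript
// Sketch only: per-key fixed-window throttle to illustrate the idea.
// `FixedWindowLimiter` is an illustrative name; state lives in memory only.
class FixedWindowLimiter {
  private readonly limit: number;
  private readonly windowMs: number;
  private readonly windows = new Map<string, { start: number; count: number }>();

  constructor(limit: number, windowMs: number) {
    this.limit = limit;
    this.windowMs = windowMs;
  }

  // Returns true if the request identified by `key` (an IP or API key) may proceed.
  allow(key: string, now: number = Date.now()): boolean {
    const current = this.windows.get(key);
    if (!current || now - current.start >= this.windowMs) {
      // First request for this key, or the previous window has expired.
      this.windows.set(key, { start: now, count: 1 });
      return true;
    }
    if (current.count >= this.limit) {
      return false; // over the limit until the window rolls over
    }
    current.count += 1;
    return true;
  }
}
```

A runaway agent loop hitting the same API key would start receiving rejections once it exceeds the limit within a window, while other keys remain unaffected.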