The OpenRouter Alternatives Cloud Providers Hide From You

Executive Snapshot: The Bottom Line

- Eliminate third-party logging vulnerabilities by deploying a self-hosted LLM gateway.

- Maintain seamless OpenAI-compatible endpoints for developers without the data exfiltration risk.

- Support GDPR Article 5 data minimization by keeping prompts and responses on infrastructure you control.

If your enterprise AI stack relies entirely on third-party API routing, your system uptime and data privacy are fundamentally out of your control. Relying on public APIs exposes sensitive, proprietary codebase snippets to unvetted middlemen, creating massive compliance liabilities.

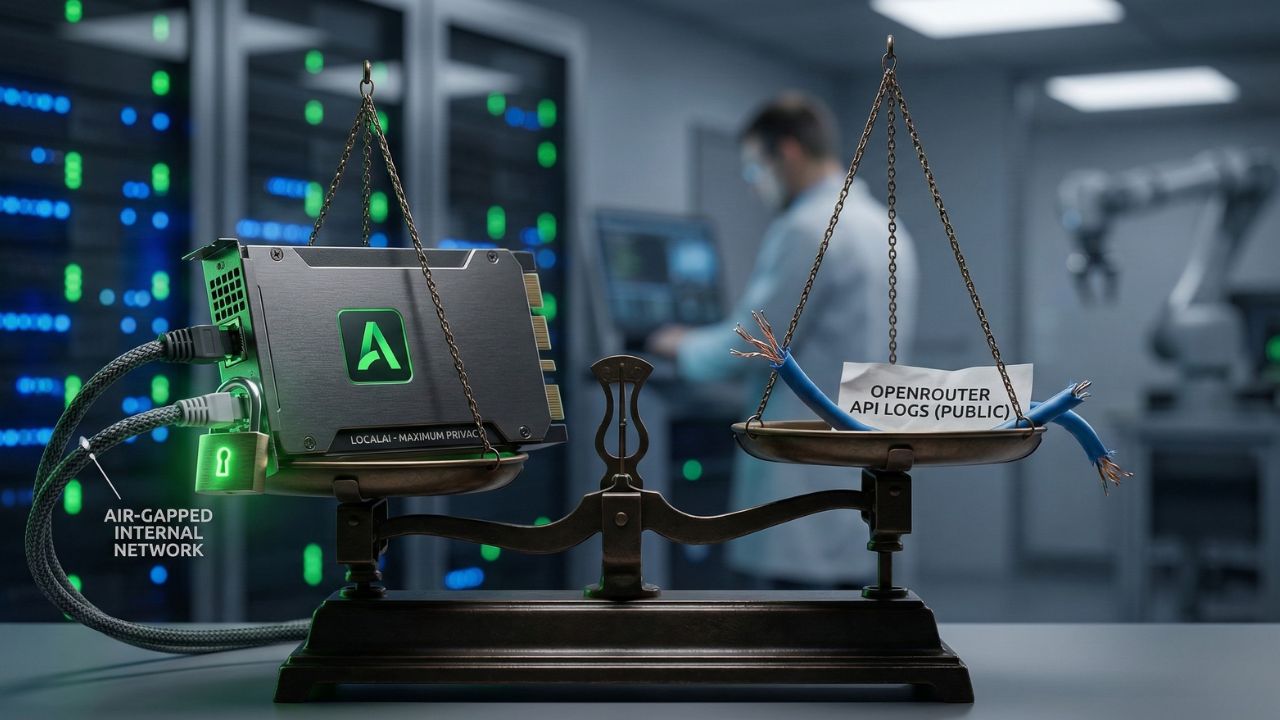

The best OpenRouter alternatives for private AI let you keep your data on-premise, and even fully air-gapped, while giving your engineering teams the same routing capabilities.

To understand the broader ecosystem and the foundational security concepts behind local versus cloud deployments, refer to our comprehensive guide on OpenRouter vs Ollama local AI.

The Security Nightmare of Public Routers

As detailed in our master analysis on Why Your OpenRouter API Habit is a Security Nightmare, funneling proprietary logic through cloud aggregators is a critical vulnerability. You are essentially trusting a proxy service with your most valuable intellectual property.

To regain absolute control and ensure regulatory compliance, forward-thinking enterprise teams are actively ripping out public routers and replacing them with private, self-hosted AI gateways.

How Private AI Endpoints Work for the Enterprise

Deploying your own API gateway for local models ensures that all developer requests are routed internally. This architecture intercepts API calls from your internal applications and transparently redirects them to local inference engines, completely bypassing the public internet.
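The redirect step described above can be sketched as a small routing table. This is an illustration only: the hostnames, port, model names, and the `.internal` suffix check are hypothetical placeholders, not part of any specific gateway product.

```python
from urllib.parse import urlsplit

# Hypothetical internal inference backends, keyed by model name.
ROUTES = {
    "llama3": "http://inference-a.internal:8080/v1",
    "deepseek-r1": "http://inference-b.internal:8080/v1",
}

# Only hosts with these suffixes count as "internal" in this sketch.
ALLOWED_SUFFIXES = (".internal",)


def resolve_upstream(model: str, path: str = "/chat/completions") -> str:
    """Return the internal URL a request for `model` should be forwarded to."""
    try:
        base = ROUTES[model]
    except KeyError:
        raise ValueError(f"no local backend configured for model {model!r}")
    host = urlsplit(base).hostname or ""
    # Refuse to forward anywhere that is not on the internal network.
    if not host.endswith(ALLOWED_SUFFIXES):
        raise ValueError(f"refusing to route to non-internal host {host!r}")
    return base.rstrip("/") + path
```

Rejecting unknown models and non-internal hosts up front means a typo in the routing table fails loudly instead of silently sending a prompt outside the network.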

When you host your own AI routing layer, you gain granular, real-time control over internal rate limiting, project-based cost management, and security auditing.
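As a rough sketch of what per-project rate limiting with an audit trail might look like, the example below uses a token bucket per project. The class names, limits, and in-memory audit list are assumptions made for illustration; a production gateway would typically use its own built-in limits and persistent logging.

```python
import time


class TokenBucket:
    """Token bucket: holds up to `capacity` tokens, refilled at a steady rate."""

    def __init__(self, capacity, refill_per_sec, clock=time.monotonic):
        self.capacity = float(capacity)
        self.refill_per_sec = float(refill_per_sec)
        self.clock = clock
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self, cost=1.0):
        now = self.clock()
        elapsed = now - self.updated
        self.updated = now
        # Refill according to elapsed time, never exceeding capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_sec)
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


class ProjectLimiter:
    """One bucket per project, plus an in-memory record of every decision."""

    def __init__(self, capacity, refill_per_sec, clock=time.monotonic):
        self._args = (capacity, refill_per_sec, clock)
        self._buckets = {}
        self.audit_log = []  # (project, allowed) tuples

    def check(self, project):
        if project not in self._buckets:
            self._buckets[project] = TokenBucket(*self._args)
        allowed = self._buckets[project].allow()
        self.audit_log.append((project, allowed))
        return allowed
```

The injectable `clock` exists so the limiter can be tested deterministically; in normal use the default monotonic clock applies.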

Evaluating the Contenders: LiteLLM vs. LocalAI

Two open-source projects have emerged as common choices for secure, scalable routing within enterprise environments:

- LiteLLM: Lets you standardize API calls across more than one hundred LLMs using the ubiquitous OpenAI format. It acts as a lightweight proxy, so your developers do not need to rewrite existing code to switch between different local or private cloud models.

- LocalAI: Serves as a drop-in replacement REST API, compatible with the OpenAI format, that runs entirely on your own hardware. Because inference happens locally, it can operate with no outbound connection, making it a suitable foundational layer for air-gapped deployments with strict HIPAA or GDPR requirements.

| Feature Profile | LiteLLM (Self-Hosted) | LocalAI | Cloud Aggregators (e.g., OpenRouter) |
|---|---|---|---|
| Hosting Model | Self-hosted or hybrid cloud | Self-hosted (bare metal or containers) | Public cloud only |
| API Format | OpenAI-compatible | OpenAI-compatible | OpenAI-compatible (OpenRouter); varies by aggregator |
| Data Privacy | High (controlled internally) | Maximum (air-gap capable) | Lower (subject to provider logging policies) |
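Because both self-hosted options accept the OpenAI request format, pointing a client at them is mostly a matter of changing the base URL. Here is a minimal sketch using only the Python standard library. The gateway hostname, port, API key, and model name are placeholders, and the request is built but not sent.

```python
import json
import urllib.request


def build_chat_request(base_url, api_key, model, prompt):
    """Build an OpenAI-style chat completion request for any compatible endpoint."""
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


# Hypothetical internal gateway; only this value changes when leaving a cloud API.
LOCAL_BASE_URL = "http://llm-gateway.internal:4000/v1"

req = build_chat_request(LOCAL_BASE_URL, "placeholder-key", "llama3", "Hello")
# To actually send it: urllib.request.urlopen(req)
```

The payload and headers are identical whichever endpoint receives them, which is why application code can usually stay untouched.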

Expert Insight: Local Load Balancing

To maximize your hardware utilization ROI, configure your private router to load balance local LLMs across your entire development team.

By pooling GPU resources on a central internal server cluster, you can serve dozens of developers simultaneously without the exorbitant cost of purchasing dedicated, high-end hardware for every individual workstation.

This centralized approach is especially crucial when running multi-agent swarms without an internet connection, as these complex autonomous workflows require continuous, high-throughput model access without hitting artificial rate limits.
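One simple way to pool GPU servers is least-connections balancing: send each request to the backend with the fewest requests in flight. The sketch below assumes hypothetical backend names and is not how any particular router implements it.

```python
import threading
from contextlib import contextmanager


class LeastBusyBalancer:
    """Hand out the backend with the fewest in-flight requests."""

    def __init__(self, backends):
        if not backends:
            raise ValueError("at least one backend is required")
        self._in_flight = {b: 0 for b in backends}
        self._lock = threading.Lock()

    @contextmanager
    def acquire(self):
        with self._lock:
            # Ties go to the backend listed first.
            backend = min(self._in_flight, key=self._in_flight.get)
            self._in_flight[backend] += 1
        try:
            yield backend
        finally:
            # Always release the slot, even if the request failed.
            with self._lock:
                self._in_flight[backend] -= 1
```

A caller would wrap each forwarded request in `with balancer.acquire() as backend:` so the counter drops when the response completes. Long-running generations then stop attracting new work, which plain round-robin cannot guarantee.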

The Hidden Trap: API Key Management

Most engineering teams assume that simply switching the backend URL to a self-hosted AI router automatically solves all security issues. This is a dangerous misconception.

The hidden trap lies in how internal API keys are distributed, rotated, and managed across the developer lifecycle. Even on a private intranet, hardcoding API keys into your applications creates a severe vulnerability.

If an internal gateway is misconfigured or a junior developer accidentally pushes an internal key to a public GitHub repository, any attacker who gains a foothold in your network can use that key to query your models and move laterally.

You must rigorously integrate your private AI router with an enterprise secrets manager (e.g., HashiCorp Vault or AWS Secrets Manager). Implementing dynamic, short-lived API keys for your internal AI networks ensures that even if a credential is leaked, it expires before it is of much use to an attacker. This supports the integrity and confidentiality principle of GDPR Article 5.
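To illustrate the idea of a short-lived credential, the sketch below signs a subject and expiry time with HMAC and rejects the token once it expires. It is a stand-in for what a secrets manager would issue, not the API of Vault or AWS Secrets Manager; the function names, token layout, and TTL are assumptions.

```python
import base64
import hashlib
import hmac
import time


def issue_token(secret: bytes, subject: str, ttl_seconds: int, now=None) -> str:
    """Return a signed token for `subject` that expires after `ttl_seconds`."""
    expires = int((time.time() if now is None else now) + ttl_seconds)
    payload = f"{subject}:{expires}".encode("utf-8")
    sig = hmac.new(secret, payload, hashlib.sha256).digest()
    return (base64.urlsafe_b64encode(payload).decode("ascii") + "."
            + base64.urlsafe_b64encode(sig).decode("ascii"))


def verify_token(secret: bytes, token: str, now=None):
    """Return the subject if the token is authentic and unexpired, else None."""
    try:
        payload_b64, sig_b64 = token.split(".")
        payload = base64.urlsafe_b64decode(payload_b64)
        sig = base64.urlsafe_b64decode(sig_b64)
        subject, expires_text = payload.decode("utf-8").rsplit(":", 1)
        expires = int(expires_text)
    except (ValueError, UnicodeDecodeError):
        return None  # malformed token
    expected = hmac.new(secret, payload, hashlib.sha256).digest()
    # Constant-time comparison avoids leaking signature bytes via timing.
    if not hmac.compare_digest(sig, expected):
        return None
    current = time.time() if now is None else now
    if current >= expires:
        return None  # expired
    return subject
```

With a TTL of a few minutes, a token that leaks into a repository or log is already useless by the time most attackers would find it.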

Conclusion

Replacing public cloud aggregators with a self-hosted AI gateway is no longer optional for engineering teams prioritizing true data sovereignty and security.

By deploying the best OpenRouter alternatives for private AI, like LiteLLM or LocalAI, you eliminate the proxy liability of public endpoints, slash recurring token costs, and future-proof your enterprise compliance posture.

Take the first step today by auditing your current API usage and provisioning your internal routing infrastructure.

Frequently Asked Questions (FAQ)

LocalAI and self-hosted instances of LiteLLM are considered the most secure alternatives. They allow you to process all LLM requests directly on internal hardware, eliminating third-party logging and ensuring your proprietary data never touches the public internet during active inference.

Private AI endpoints act as secure internal proxies for your developers. When an application makes an API call, it is directed to a local server instead of a public cloud. This internal server processes the prompt using locally hosted models, returning the response securely.

LiteLLM is an excellent OpenRouter alternative for enterprise teams needing to standardize API calls. When self-hosted on your network, it provides comparable routing flexibility to cloud aggregators, but allows you to point developer requests exclusively to your private, on-premise infrastructure.

You can host your own AI routing layer. By utilizing modern open-source tools, you can deploy a comprehensive API gateway directly on your internal network. This architecture allows you to manage rate limits, track internal usage metrics, and securely route prompts to local models like Llama 3 or DeepSeek R1.

The primary costs are server hardware, electricity, and the staff time to operate it; the routing software itself is typically open-source and free to use. Unlike cloud providers that charge per token, self-hosting requires an upfront investment in GPUs, after which your marginal inference cost falls to roughly the price of power and maintenance.
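A quick way to sanity-check that trade-off is a break-even calculation: how many tokens must you process before the hardware pays for itself? The function below is a simplified sketch that ignores depreciation and staffing, and every figure in the usage line is a made-up placeholder rather than a real price.

```python
def break_even_tokens(hardware_cost, cloud_price_per_million, local_cost_per_million):
    """Tokens needed before upfront hardware spend is recovered.

    Prices are per one million tokens; local cost covers power and upkeep.
    """
    saving = cloud_price_per_million - local_cost_per_million
    if saving <= 0:
        raise ValueError("self-hosting never breaks even at these rates")
    return hardware_cost / saving * 1_000_000


# Hypothetical numbers purely for illustration.
tokens = break_even_tokens(
    hardware_cost=20_000,
    cloud_price_per_million=5.0,
    local_cost_per_million=1.0,
)
```

With these placeholder inputs the result is five billion tokens; substitute your own hardware quotes and measured usage to see whether the investment is justified.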

The best OpenRouter alternatives for private AI offer native OpenAI-compatible endpoints. This means developers can usually switch from cloud APIs to local models by changing the base URL and model name, without rewriting existing application code.

You should actively manage internal API keys using established enterprise secrets management platforms like HashiCorp Vault. Always avoid hardcoding keys in plain text, and configure your self-hosted AI router to strictly require short-lived, dynamically generated tokens for all internal developer requests.

OpenRouter functions as a commercial cloud aggregator that routes requests to various third-party models over the public internet. Conversely, LocalAI is an open-source, self-hosted platform that allows you to run models entirely on your own hardware, ensuring maximum data privacy and absolute data sovereignty.

You can effectively load balance local LLMs by deploying an internal proxy server, such as HAProxy or NGINX, in front of multiple model instances. This strategy distributes incoming developer requests evenly across your available GPU servers, actively preventing system bottlenecks during peak engineering hours.

For strict HIPAA requirements, a fully air-gapped LocalAI deployment is a strong option. Because this setup needs no outbound internet connection, sensitive patient health information cannot be sent to external cloud model providers during inference. Air-gapping alone does not make a system HIPAA compliant; access controls and audit logging are still required.