The Multimodal Streaming Architecture OpenAI Is Hiding

OpenAI just tapped former JioStar digital CEO Kiran Mani as its Managing Director for the Asia-Pacific region, effective June 2026. Bringing a streaming and media titan to lead OpenAI APAC means one thing for software engineers: text-based API wrappers are officially dead.

Quick Facts

- The executive hire: Kiran Mani will relocate to Singapore to direct OpenAI's massive APAC expansion.

- The media pivot: The future of AI in the region is real-time, high-bandwidth multimodal streaming over hyper-fast networks.

- The developer mandate: Developers whose architectures are built around parsing JSON text responses need to adopt native audio/video streaming protocols quickly to stay competitive in the new ecosystem.

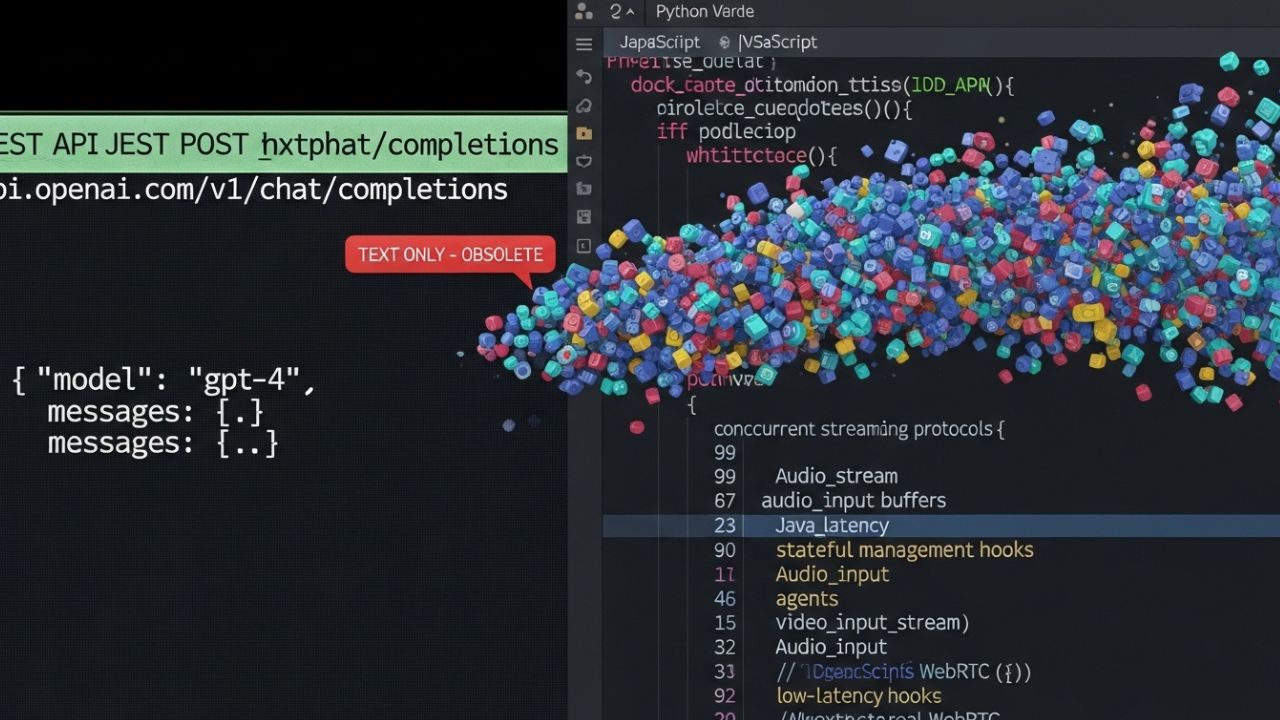

The Death of the Text Wrapper

For the last three years, engineers have built entire startups around simple REST integrations.

You sent a text prompt, waited for a JSON response, and rendered it. That era is over.
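For contrast, here is a minimal sketch of that request-response "wrapper" pattern. The endpoint URL, the payload shape, and the `output` field are hypothetical placeholders, not any provider's real API. What matters is the shape of the cycle: one prompt in, one JSON document out, and no state kept between calls.

```python
import json
import urllib.request

# Hypothetical endpoint and payload schema, for illustration only.
ENDPOINT = "https://api.example.com/v1/generate"


def build_request(prompt: str) -> urllib.request.Request:
    """Package a single text prompt as a one-shot HTTP POST."""
    body = json.dumps({"prompt": prompt}).encode("utf-8")
    return urllib.request.Request(
        ENDPOINT,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def parse_response(raw: bytes) -> str:
    """Extract the generated text from a JSON body (hypothetical 'output' field)."""
    return json.loads(raw)["output"]


def ask(prompt: str) -> str:
    # Each call sends one request, blocks until the full response arrives,
    # and keeps no state for the next call.
    with urllib.request.urlopen(build_request(prompt)) as resp:
        return parse_response(resp.read())
```

Nothing streams here: the user sees nothing until the whole JSON body has arrived and been parsed.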

Mani's appointment signals that OpenAI sees the future not in chat interfaces but in persistent, high-bandwidth audio and video.

The company needs executives who understand large-scale streaming infrastructure, because that is what its next-generation models demand.

"If your entire architecture relies on parsing text responses, you are building for a web that will not exist in two years. Multimodal streaming is the only path forward."

The Shift to Native Streaming

Text request-response cycles are too slow for the next generation of autonomous agents.

The industry is moving aggressively toward WebSockets and WebRTC to maintain live, stateful connections between the user and the model.
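A persistent, stateful connection differs from the request-response cycle in that both sides send and receive at the same time over one long-lived session. The sketch below models that with two `asyncio.Queue` objects standing in for the two directions of a WebSocket or WebRTC data channel. `fake_model` is a hypothetical stand-in for a model endpoint; no real network protocol is involved.

```python
import asyncio
from typing import List, Optional

# A None on either queue marks the end of that direction of the stream.
Chunk = Optional[str]


async def fake_model(uplink: "asyncio.Queue[Chunk]", downlink: "asyncio.Queue[Chunk]") -> None:
    """Hypothetical model: keeps per-session state and replies to each chunk as it arrives."""
    seen = 0  # session state that survives across chunks
    while True:
        chunk = await uplink.get()
        if chunk is None:
            await downlink.put(None)
            return
        seen += 1
        await downlink.put(f"ack {seen}: {chunk}")


async def send_chunks(uplink: "asyncio.Queue[Chunk]", chunks: List[str]) -> None:
    for chunk in chunks:
        await uplink.put(chunk)
        await asyncio.sleep(0)  # yield so the model and receiver run between sends
    await uplink.put(None)


async def receive(downlink: "asyncio.Queue[Chunk]") -> List[str]:
    replies = []
    while (msg := await downlink.get()) is not None:
        replies.append(msg)
    return replies


async def run_session(chunks: List[str]) -> List[str]:
    uplink: "asyncio.Queue[Chunk]" = asyncio.Queue()
    downlink: "asyncio.Queue[Chunk]" = asyncio.Queue()
    # Sending, model processing and receiving all run concurrently on one session.
    _, _, replies = await asyncio.gather(
        send_chunks(uplink, chunks),
        fake_model(uplink, downlink),
        receive(downlink),
    )
    return replies


if __name__ == "__main__":
    print(asyncio.run(run_session(["chunk-a", "chunk-b", "chunk-c"])))
```

Replies start flowing back while later chunks are still being sent, which is the property a plain HTTP request cannot offer.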

Engineers need to adapt quickly. Those who rely solely on standard HTTP endpoints risk seeing their skills devalued as enterprise clients demand real-time video analysis and low-latency voice generation.

Why It Matters

As generative models become fully multimodal, the economics of enterprise AI integrations will change fundamentally.

Companies that rely on basic text integrations risk being displaced.

Managers need to understand how OpenAI's India and APAC strategy will affect global capability centers (GCCs).

Engineering teams must monitor the hidden expenses of these high-bandwidth integrations to avoid the localized API cost trap.
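A back-of-the-envelope estimate shows why these costs are easy to miss. Uncompressed 16 kHz, 16-bit mono PCM audio works out to 16,000 × 2 bytes = 32,000 bytes per second before any protocol overhead, and video is far larger. The helpers below do that arithmetic; the 16 kHz audio format and the `price_per_gb` figure are assumptions to replace with your own stream format and your provider's actual pricing.

```python
def pcm_bytes(seconds: float, sample_rate_hz: int = 16_000,
              bits_per_sample: int = 16, channels: int = 1) -> int:
    """Raw (uncompressed) PCM payload size for a stream of the given length."""
    bytes_per_second = sample_rate_hz * (bits_per_sample // 8) * channels
    return int(seconds * bytes_per_second)


def monthly_transfer_cost(sessions_per_day: int, minutes_per_session: float,
                          price_per_gb: float, days: int = 30) -> float:
    """Estimated transfer cost of the raw audio uplink only; price_per_gb is an assumed input."""
    per_session = pcm_bytes(minutes_per_session * 60)
    total_gb = per_session * sessions_per_day * days / 1e9
    return total_gb * price_per_gb


if __name__ == "__main__":
    print(pcm_bytes(60))  # one minute of 16 kHz, 16-bit mono audio → 1920000
```

Even this audio-only figure ignores compression, retransmissions and video, so treat it as a starting point for monitoring rather than a forecast.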

Developers can use new AI tools to bridge the gap.

For instance, vibe coding lets you build complex apps using natural-language prompts, which accelerates the migration away from legacy frameworks.

Frequently Asked Questions

1. What is multimodal AI streaming architecture?

It is a network design that prioritizes continuous, real-time transmission of video and audio directly to AI models, bypassing traditional text-based request-response cycles.

2. How do I build real-time video AI applications?

Engineers need to abandon REST and adopt persistent protocols such as WebSockets or WebRTC to stream frames and audio directly to an edge node.
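Streaming audio to an edge node usually starts with cutting the raw signal into small, fixed-duration frames so each one can be sent as soon as it is captured. A minimal sketch, assuming 16 kHz, 16-bit mono PCM and a 20 ms frame size (both illustrative choices); the `Frame` structure with a sequence number and timestamp is hypothetical, not any specific protocol's wire format.

```python
from dataclasses import dataclass
from typing import Iterator

SAMPLE_RATE_HZ = 16_000
BYTES_PER_SAMPLE = 2  # 16-bit mono
FRAME_MS = 20


@dataclass
class Frame:
    seq: int           # lets the receiver detect loss or reordering
    timestamp_ms: int  # position of the frame within the stream
    payload: bytes


def frame_pcm(pcm: bytes, frame_ms: int = FRAME_MS) -> Iterator[Frame]:
    """Split raw PCM into fixed-duration frames; the last frame may be shorter."""
    frame_bytes = SAMPLE_RATE_HZ * BYTES_PER_SAMPLE * frame_ms // 1000  # 640 at 20 ms
    for seq, start in enumerate(range(0, len(pcm), frame_bytes)):
        yield Frame(seq=seq, timestamp_ms=seq * frame_ms,
                    payload=pcm[start:start + frame_bytes])
```

Each frame can be written to a persistent connection the moment it is produced, instead of waiting for the whole recording to finish.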

3. Why are text-based LLM APIs becoming obsolete?

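The argument here is that enterprise demand is moving toward real-time voice and video, where waiting for a complete text response adds too much latency. OpenAI's decision to put a streaming and media executive in charge of APAC is read as a signal of that shift.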

4. How does OpenAI's media strategy affect software engineers?

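It raises the value of streaming skills. Engineers whose products depend on parsing JSON text responses will need to adopt native audio/video streaming protocols such as WebSockets and WebRTC, while those who only build basic REST wrappers risk being displaced.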

5. What are the best practices for low-latency AI streaming?

Minimize middleware, maintain persistent edge connections, and process chunks of multimodal data concurrently.
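Processing chunks concurrently can be sketched with `asyncio.gather` plus a semaphore that caps how many chunks are in flight at once. `analyze_chunk` is a hypothetical placeholder for whatever per-chunk work your pipeline does (feature extraction, a model call); its sleep only simulates latency. `gather` returns results in input order even though the work overlaps.

```python
import asyncio
from typing import List


async def analyze_chunk(index: int, chunk: bytes) -> str:
    """Hypothetical per-chunk work; the sleep stands in for I/O or inference latency."""
    await asyncio.sleep(0.01)
    return f"chunk {index}: len={len(chunk)}"


async def process_stream(chunks: List[bytes], max_in_flight: int = 4) -> List[str]:
    limit = asyncio.Semaphore(max_in_flight)  # bound concurrency to protect the backend

    async def worker(i: int, chunk: bytes) -> str:
        async with limit:
            return await analyze_chunk(i, chunk)

    # gather runs the workers concurrently and keeps results in input order.
    return list(await asyncio.gather(*(worker(i, c) for i, c in enumerate(chunks))))
```

The semaphore is the design choice that matters: unbounded concurrency on a live stream can overwhelm a downstream model as easily as serial processing can starve it.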

6. How do I handle stateful multimodal AI inputs?

Systems must maintain memory context at the session level, streaming deltas or keyframes rather than resending the entire context window.
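The session-level approach can be sketched as a small object that remembers what the model has already received and sends only new items, with a full keyframe every N updates so the receiver can resynchronize. The `Session` class, its `keyframe_every` parameter, and the message dictionaries are hypothetical, for illustration only.

```python
from typing import Dict, List


class Session:
    """Tracks context already sent so each update carries only the delta."""

    def __init__(self, keyframe_every: int = 5) -> None:
        self.context: List[str] = []  # full session memory, kept locally
        self.sent_upto = 0            # how many context items the model has seen
        self.updates = 0
        self.keyframe_every = keyframe_every

    def add(self, item: str) -> None:
        self.context.append(item)

    def next_message(self) -> Dict[str, object]:
        self.updates += 1
        if self.updates % self.keyframe_every == 0:
            # Periodic keyframe: resend everything so a receiver can resync.
            msg = {"type": "keyframe", "items": list(self.context)}
        else:
            # Delta: only the items appended since the last message.
            msg = {"type": "delta", "items": self.context[self.sent_upto:]}
        self.sent_upto = len(self.context)
        return msg
```

Most messages stay small because they carry only what changed, while the periodic keyframe bounds how long a receiver that dropped a delta stays out of sync.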

7. What tools are needed for agentic video analysis?

You need ultra-fast frame extraction libraries, distributed edge compute platforms, and native SDKs that handle constant bi-directional feeds.

8. How do I transition from REST APIs to AI streaming protocols?

Start by rewriting middleware to handle asynchronous event loops and replace standard HTTP endpoints with persistent duplex connections.
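The move from one request per connection to a persistent duplex connection driven by an event loop can be sketched with Python's standard `asyncio` streams. Plain TCP with newline-delimited messages stands in here for WebSockets or WebRTC, which need additional libraries; the `handle_session` handler and its uppercase "reply" are hypothetical placeholders for real model logic.

```python
import asyncio
from typing import List


async def handle_session(reader: asyncio.StreamReader, writer: asyncio.StreamWriter) -> None:
    """One long-lived connection per client; each line in gets one line out."""
    turns = 0  # state that lives as long as the connection
    while line := await reader.readline():  # b"" at EOF ends the loop
        turns += 1
        writer.write(f"{turns}:{line.decode().strip().upper()}\n".encode())
        await writer.drain()
    writer.close()
    await writer.wait_closed()


async def demo(messages: List[str]) -> List[str]:
    server = await asyncio.start_server(handle_session, "127.0.0.1", 0)
    port = server.sockets[0].getsockname()[1]
    reader, writer = await asyncio.open_connection("127.0.0.1", port)
    replies = []
    for msg in messages:  # many exchanges over the same open connection
        writer.write((msg + "\n").encode())
        await writer.drain()
        replies.append((await reader.readline()).decode().strip())
    writer.close()
    await writer.wait_closed()
    server.close()
    await server.wait_closed()
    return replies


if __name__ == "__main__":
    print(asyncio.run(demo(["hello", "again"])))
```

The turn counter shows the key difference from a stateless HTTP endpoint: the server keeps context for as long as the client stays connected.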

9. Are developers losing jobs to multimodal autonomous agents?

Those who only know how to build basic REST wrappers will struggle, while engineers who architect complex streaming environments will remain highly valued.

10. How do I integrate live audio/video with OpenAI models?

Use the newest generation of multimodal SDKs, which accept audio and video as native inputs rather than transcribing them to text first.