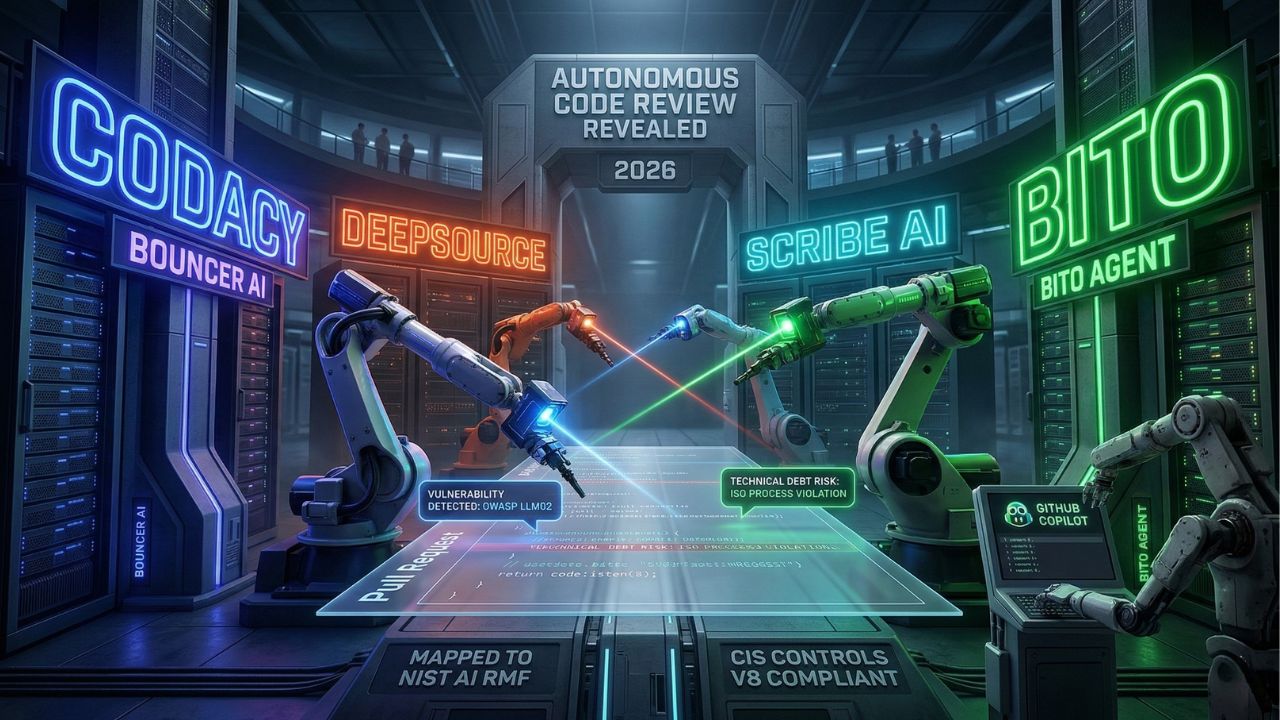

Best AI Agents for Autonomous Code Review 2026 Revealed

Bottom Line: The Autonomous Review Mandate

- The Core Issue: Human engineers simply can't keep up with AI code output.

- Compliance Necessity: Automated PR reviews must operate within your SOC 2 Type II change-management controls (criterion CC8.1, which covers how changes are authorized, tested, and approved).

- Top 2026 Performers: Agents like CodeRabbit, Codiumate, and Ellipsis lead the market in complex logic verification.

- The Immediate Action: Integrate an autonomous reviewer directly into your CI/CD pipeline to catch vulnerabilities before the final merge.

Human reviewers are rubber-stamping AI-generated pull requests out of sheer fatigue.

Letting unverified AI output flood your CI/CD pipeline introduces critical security flaws and puts your SOC 2 Type II compliance at risk.

As detailed in our master guide, Why "Vibe Coding" Is Destroying Your Codebase, you need one of the best AI agents for autonomous code review in 2026 to verify what your AI actually wrote and to maintain strict change-management controls.

The Hidden Trap: What Most Teams Get Wrong About AI Code Reviews

Most engineering leaders mistakenly believe that throwing a standard large language model at a pull request diff constitutes an "AI review."

This is a massive hidden trap. Basic wrappers see only the diff, so they act as little more than syntax checkers: they lack the architectural context required to catch deep, structural degradation.

They will happily rubber-stamp hallucinated logic as long as the immediate code compiles.

A true 2026 agentic workflow understands your entire repository's dependency tree.

Relying on basic diff-scanners results in a false sense of security while compounding your technical debt exponentially.

How Do Autonomous AI Reviewers Detect Complex Logic Flaws?

Advanced AI review agents do not just read code; they simulate execution paths.

When testing for vulnerabilities, these specialized tools map out state changes across your microservices to identify silent dependency failures.

They avoid overflowing the model's context window by indexing your repository in a vector database and querying it for each review.

This pulls the relevant interfaces into the prompt automatically, so the agent sees the full impact of a local change.
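The retrieval step described above can be sketched in a few lines. This is a minimal illustration, not any vendor's implementation. The repository chunks are hypothetical, and a toy bag-of-words vector stands in for a real embedding model and vector database. The shape of the idea is the same: embed each chunk of the repository once, embed the PR diff as a query, and pull the closest chunks into the review prompt.

```python
import math
import re
from collections import Counter
from typing import Dict, List

# Hypothetical repository chunks; a real agent would index every file in the repo.
REPO_CHUNKS: Dict[str, str] = {
    "billing/invoice.py::create_invoice":
        "def create_invoice(customer_id, amount): validate amount then persist invoice",
    "billing/tax.py::apply_tax":
        "def apply_tax(amount, region): look up region rate and return taxed amount",
    "auth/session.py::refresh_token":
        "def refresh_token(session): rotate the session token and update expiry",
}

def embed(text: str) -> Counter:
    """Toy embedding: term counts of identifier-like tokens (stand-in for a real model)."""
    return Counter(re.findall(r"[a-z_]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

# The "vector database": each chunk is embedded once, ahead of any review.
INDEX: Dict[str, Counter] = {cid: embed(text) for cid, text in REPO_CHUNKS.items()}

def retrieve_context(diff: str, k: int = 2) -> List[str]:
    """Return the ids of the k indexed chunks most similar to the PR diff."""
    query = embed(diff)
    return sorted(INDEX, key=lambda cid: cosine(query, INDEX[cid]), reverse=True)[:k]

def build_review_prompt(diff: str) -> str:
    """Prepend the retrieved chunks so the reviewer sees code outside the diff."""
    context = "\n".join(f"# {cid}\n{REPO_CHUNKS[cid]}" for cid in retrieve_context(diff))
    return f"Related code:\n{context}\n\nDiff under review:\n{diff}"

SAMPLE_DIFF = "- return apply_tax(amount, region)\n+ return amount  # skip tax for now"

if __name__ == "__main__":
    print(build_review_prompt(SAMPLE_DIFF))
```

With `SAMPLE_DIFF`, the `apply_tax` chunk ranks first because it shares the most terms with the change. As a result, the reviewer is shown the function that the diff silently stops calling.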

For a deeper look at establishing these checks during active development, review our Agile framework in 4 Steps to Audit AI-Generated Code & Stop Tech Debt.

Expert Insight: Never use the exact same foundational model to write the code and review the code.

If your developers use Claude 3.5 Sonnet to generate the pull request, configure your CI/CD pipeline to evaluate the logic with an enterprise-tuned GPT-4o model instead.

This model diversity reduces the risk of shared hallucination blind spots.
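One way to enforce this rule in a pipeline is to pick the reviewer model from a different vendor family than the authoring model. The sketch below assumes that the authoring model is recorded in a hypothetical `AI-Model:` commit trailer. The model identifiers, vendor mapping, and preference order are illustrative placeholders to replace with whatever your organization actually licenses.

```python
from typing import Optional

# Illustrative model identifiers mapped to vendor families (assumptions; use your own).
MODEL_FAMILY = {
    "claude-3-5-sonnet": "anthropic",
    "gpt-4o": "openai",
    "gemini-1.5-pro": "google",
}
# Reviewer candidates in order of preference.
REVIEWER_PREFERENCE = ["gpt-4o", "claude-3-5-sonnet", "gemini-1.5-pro"]

def author_model_from_message(commit_message: str) -> Optional[str]:
    """Read the hypothetical 'AI-Model: <id>' trailer from a commit message."""
    for line in commit_message.splitlines():
        if line.lower().startswith("ai-model:"):
            return line.split(":", 1)[1].strip()
    return None

def pick_reviewer(author_model: str) -> str:
    """Return the first preferred reviewer from a different vendor family than the author."""
    author_family = MODEL_FAMILY.get(author_model)
    if author_family is None:
        raise ValueError(f"unknown author model: {author_model!r}")
    for candidate in REVIEWER_PREFERENCE:
        if MODEL_FAMILY[candidate] != author_family:
            return candidate
    raise RuntimeError("no reviewer from a different model family is configured")
```

For a commit whose trailer reads `AI-Model: claude-3-5-sonnet`, `pick_reviewer` returns `gpt-4o`. For a GPT-4o-authored change, it falls through to `claude-3-5-sonnet`.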

Top Performers: Best AI Agents for Autonomous Code Review in 2026

Evaluating the right tool requires looking past basic linting features.

You must prioritize agents capable of maintaining human authorization controls and deep repository context.

| Autonomous Agent | Core Strength in 2026 | Legacy Monolith Support | Security Compliance Focus |
|---|---|---|---|
| CodeRabbit | Context-aware, line-by-line feedback | High (deep vector search) | SOC 2 Type II, ISO 27001 |
| Codiumate | Automated test generation and verification | Medium (best for microservices) | OWASP Top 10 |
| Ellipsis | Pushing refactor commits directly in the PR | High (repository-wide refactoring) | CC8.1 Change Management |
| Sweep | Turning tickets directly into reviewed PRs | Medium | Continuous vulnerability tracking |

4 Steps to Integrate Autonomous Agents into Your CI/CD Pipeline

Implementing these tools requires a structured approach to avoid disrupting your existing developer workflow.

1. Define the Authorization Boundary: Map your pipeline to the SOC 2 Type II change-management criterion (CC8.1) so that every automated change leaves an auditable trail.

2. Configure the Webhook: Connect the AI agent via a secure GitHub App or GitLab webhook, granting it access only to the repositories it needs to review.

3. Set the Hard Gates: Configure the AI agent to block merges when a function exceeds your cyclomatic complexity threshold or when it detects vulnerabilities from the OWASP Top 10.

4. Establish the Human Override: Ensure senior engineers maintain the final say, allowing them to override false positives and document the decision.
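Steps 3 and 4 can be combined into a single gate function. This is a minimal sketch under several assumptions:

- Complexity is an approximate McCabe count computed with Python's `ast` module (1 plus one per branch point).
- The threshold of 10 is a commonly used default rather than a requirement.
- `owasp_findings` stands in for the output of whatever security scanner or agent you run.

A block can only be lifted when a named reviewer supplies a written justification, which keeps the override auditable.

```python
import ast
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# Node types treated as one extra decision point each (an approximation of McCabe's count).
BRANCH_NODES = (ast.If, ast.For, ast.AsyncFor, ast.While, ast.IfExp,
                ast.ExceptHandler, ast.comprehension)

def cyclomatic_complexity(func: ast.AST) -> int:
    """1 + one per branch node + extra operands in and/or chains.
    Nested functions are counted toward their enclosing function."""
    score = 1
    for node in ast.walk(func):
        if isinstance(node, BRANCH_NODES):
            score += 1
        elif isinstance(node, ast.BoolOp):
            score += len(node.values) - 1
    return score

def function_complexities(source: str) -> Dict[str, int]:
    """Map each function name in the source to its approximate complexity."""
    tree = ast.parse(source)
    return {
        node.name: cyclomatic_complexity(node)
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
    }

@dataclass
class GateResult:
    allowed: bool
    reasons: List[str] = field(default_factory=list)
    override_by: Optional[str] = None      # who lifted the block (audit trail)
    override_note: Optional[str] = None    # documented justification

def merge_gate(source: str, owasp_findings: List[str], threshold: int = 10,
               override_by: Optional[str] = None,
               override_note: Optional[str] = None) -> GateResult:
    """Hard gate (step 3) plus documented human override (step 4)."""
    reasons = [f"{name}: complexity {score} exceeds {threshold}"
               for name, score in function_complexities(source).items()
               if score > threshold]
    reasons += [f"OWASP finding: {finding}" for finding in owasp_findings]
    if not reasons:
        return GateResult(allowed=True)
    if override_by and override_note:
        # The merge proceeds, but the blocking reasons stay on record with the override.
        return GateResult(True, reasons, override_by, override_note)
    return GateResult(False, reasons)
```

An override without a note is rejected on purpose. The decision record is what makes the human override useful as evidence for CC8.1, not just the merge itself.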

Conclusion: Upgrade Your Review Pipeline Today

If your development team is leveraging AI generation, you need specialized autonomous code review agents to actually verify what your AI wrote.

Stop relying on fatigued human reviewers to catch hallucinated logic.

Start by auditing your current PR bottleneck and piloting one of the 2026 market leaders today.

Ready to protect your broader infrastructure from autonomous bloat? Read our complete guide on Managing technical debt in the age of Devin and Cline next.

Frequently Asked Questions (FAQ)

Autonomous agents do not replace human reviewers. While the best AI agents for autonomous code review in 2026 handle syntax, style, and security checks, human oversight remains mandatory for complex architectural alignment and for maintaining SOC 2 Type II authorization controls.

In 2026, leading GitHub-integrated reviewers include CodeRabbit, Ellipsis, and Codiumate. These agents install directly as GitHub Apps, providing inline comments, automated test generation, and deep repository context to prevent unmaintainable code from merging.

Advanced agents parse your entire repository into a vector database to understand dependencies. They do not just scan the immediate PR diff; they simulate data flow to identify silent dependency failures and hallucinated logic before execution.

Early-generation tools were notoriously noisy, but premium agents use iterative self-reflection to cut that noise drastically. They verify their own findings against your specific codebase context before posting a comment, so developers see mainly actionable feedback.
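The self-reflection step can be approximated with a simple post-filter applied before anything is posted. Discard any finding that refers to a symbol that does not exist in the repository, which is a common sign of hallucination. Also discard any finding that falls below a confidence threshold. This is a hedged sketch of the general idea, not how any particular vendor implements it. The `Finding` fields, the self-reported confidence score, and the 0.7 cutoff are all assumptions.

```python
import ast
from dataclasses import dataclass
from typing import Dict, List, Set

@dataclass(frozen=True)
class Finding:
    symbol: str        # function or class the comment is about
    message: str
    confidence: float  # self-reported by the reviewing model, 0.0 to 1.0 (assumption)

def defined_symbols(sources: Dict[str, str]) -> Set[str]:
    """Collect every function and class name defined across the repository sources."""
    names: Set[str] = set()
    for code in sources.values():
        for node in ast.walk(ast.parse(code)):
            if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
                names.add(node.name)
    return names

def reflect_and_filter(findings: List[Finding], sources: Dict[str, str],
                       min_confidence: float = 0.7) -> List[Finding]:
    """Keep only findings about real symbols that clear the confidence bar."""
    known = defined_symbols(sources)
    return [f for f in findings if f.symbol in known and f.confidence >= min_confidence]
```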

Enterprise pricing typically ranges from $20 to $50 per developer per month. Given that human reviewers are rubber-stamping pull requests out of sheer fatigue, the engineering hours reclaimed can quickly justify the license cost.
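The ROI claim is easy to check with break-even arithmetic. Divide the monthly license price by an engineer's loaded hourly cost to get the review hours each developer must save per month. The $20 and $50 price points come from the text above. The $100/hour loaded cost is an illustrative assumption, so substitute your own figure.

```python
def break_even_hours(license_per_dev_month: float, hourly_cost: float) -> float:
    """Review hours each developer must save per month for the license to pay for itself."""
    if hourly_cost <= 0:
        raise ValueError("hourly_cost must be positive")
    return license_per_dev_month / hourly_cost

# The $20 and $50 price points come from the text; the $100/hour cost is an assumption.
ASSUMED_HOURLY_COST = 100.0
for price in (20.0, 50.0):
    minutes = break_even_hours(price, ASSUMED_HOURLY_COST) * 60
    print(f"${price:.0f}/dev/month breaks even after {minutes:.0f} minutes saved per month")
```

At that assumed rate, the licenses pay for themselves once each developer saves 12 or 30 minutes of review time per month, respectively.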

Modern autonomous reviewers can also ingest your internal style guides and linting rules. They automatically request formatting changes, or even push commits that fix style violations directly within the active pull request.

Integration is typically done via a GitHub or GitLab App installation. You configure the agent as a required status check in your branch protection rules, ensuring PRs cannot merge until the AI approves or a human overrides.
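Wiring the agent in as a required status check can look like the sketch below. It uses GitHub's commit status REST endpoint (`POST /repos/{owner}/{repo}/statuses/{sha}`) and only builds the request, using Python's standard library. The owner, repository, SHA, token, and the `ai-review/autonomous` context name are hypothetical placeholders. Whatever context name you choose must match the check you mark as required in your branch protection rules. GitHub Apps can alternatively report results through the Checks API, which this sketch does not cover.

```python
import json
import urllib.request

def build_status_request(owner: str, repo: str, sha: str, passed: bool, token: str,
                         context: str = "ai-review/autonomous") -> urllib.request.Request:
    """Build (but do not send) a GitHub commit-status request for the AI review result."""
    payload = {
        "state": "success" if passed else "failure",   # a failing required check blocks the merge
        "description": "AI review passed" if passed else "AI review found blocking issues",
        "context": context,                             # must match the required check name
    }
    return urllib.request.Request(
        url=f"https://api.github.com/repos/{owner}/{repo}/statuses/{sha}",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Accept": "application/vnd.github+json",
            "Authorization": f"Bearer {token}",
            "X-GitHub-Api-Version": "2022-11-28",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Sending it is a single `urllib.request.urlopen(req)` call. A human override would then post a new `success` status for the same context, ideally after recording the justification.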

Support for legacy monoliths depends on the agent's context window. Premium tools use Retrieval-Augmented Generation (RAG) to map legacy monoliths effectively, though they still perform much better when reviewing modular, well-documented microservice architectures.

The most secure agents offer self-hosted or zero-data-retention deployments. The vendor should hold a current SOC 2 Type II attestation covering change management (CC8.1) and contractually guarantee that your proprietary business logic is never used to train external models.

Training a local agent involves fine-tuning an open-weights model on your historical pull requests and hosting it securely on-premises. You then connect it to your Git server using custom webhooks so that diffs are evaluated entirely in private.
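For a privately hosted reviewer, the webhook endpoint should reject any delivery it cannot authenticate before a diff reaches the model. The sketch below assumes GitHub-style deliveries, which carry an HMAC-SHA256 of the raw request body in the `X-Hub-Signature-256` header. GitLab instead sends a shared secret in `X-Gitlab-Token`. `review_fn` is a hypothetical stand-in for your on-premises model. The plain `headers` dict assumes your web framework has already normalized header names.

```python
import hashlib
import hmac
import json
from typing import Any, Callable, Dict, Tuple

def verify_signature(secret: bytes, body: bytes, signature_header: str) -> bool:
    """Check a GitHub-style 'sha256=<hex>' signature against the raw request body."""
    if not signature_header.startswith("sha256="):
        return False
    expected = "sha256=" + hmac.new(secret, body, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected.encode("ascii"), signature_header.encode("utf-8"))

def handle_pull_request_event(secret: bytes, body: bytes, headers: Dict[str, str],
                              review_fn: Callable[[Dict[str, Any]], Any]) -> Tuple[int, Any]:
    """Authenticate the delivery, then hand the parsed event to the local reviewer."""
    if not verify_signature(secret, body, headers.get("X-Hub-Signature-256", "")):
        return 401, "invalid signature"
    event = json.loads(body)
    return 200, review_fn(event)
```

Verifying against the raw bytes, before any JSON parsing, matters: re-serializing the parsed payload can change whitespace or key order and break the signature.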