Why "Vibe Coding" Is Destroying Your Codebase

Executive Summary: AI Governance at a Glance

- IDE Data Privacy: Prevent proprietary logic leaks via third-party black boxes.

- Code Auditing: Enforce ISO/IEC/IEEE 12207 life cycle processes during Scrum sprints.

- Change Management: Align autonomous reviews with the SOC 2 Type II change management criterion (CC8.1).

- Vulnerability Management: Maintain CIS Controls v8 (Control 7) to stop codebase rot.

- Automated Decisions: Comply with GDPR Article 22 when automating Agile user stories.

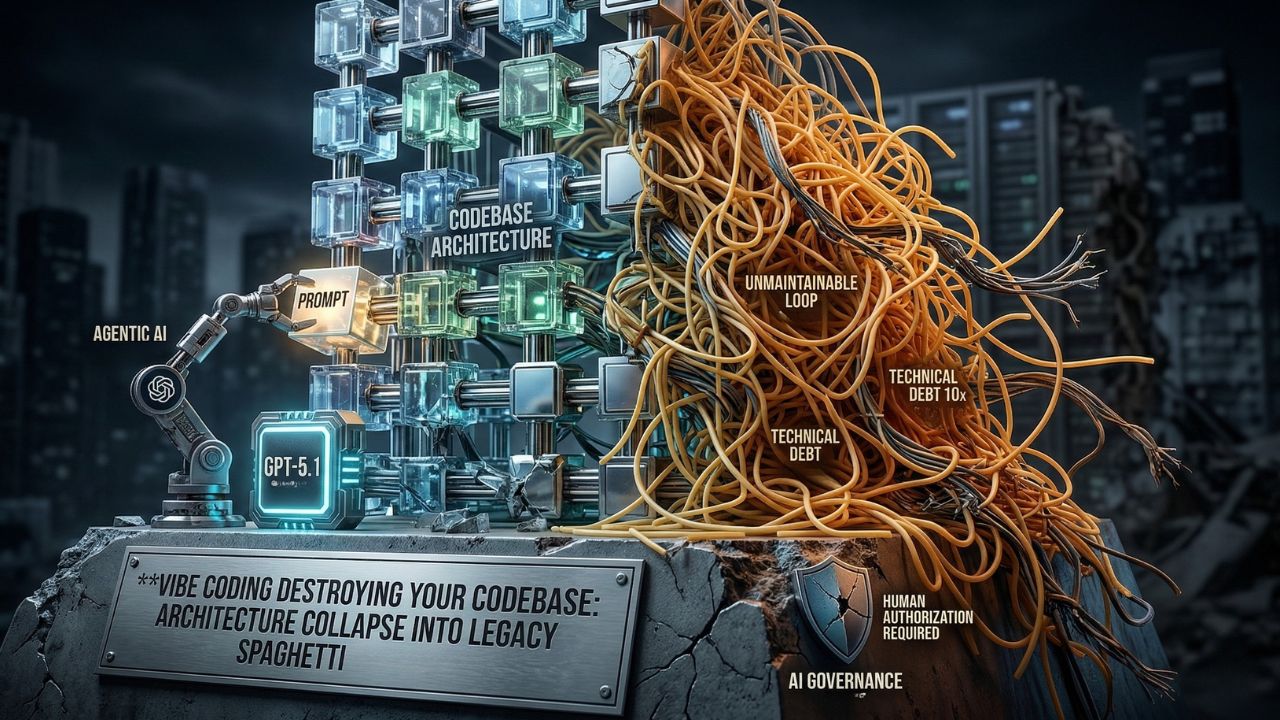

Developers operating on autopilot might feel incredibly fast, but is vibe coding killing your codebase?

Without human oversight, relying on AI to generate architecture can lead to severe IP loss and unmanageable bloat.

As detailed in our master guide on AI Governance Rules, implementing 5 rules for AI governance will save your architecture from collapsing into legacy spaghetti code and provide the framework needed to maintain control.

Let's map these processes to the NIST AI Risk Management Framework (AI RMF) and the EU's GDPR to support your compliance work.

The Hidden Trap: What Most Organizations Miss About Vibe Coding

"Vibe coding" feels incredibly fast until you realize your engineers no longer understand their own architecture.

What most enterprises miss is the compounding nature of AI-generated technical debt when operating outside a Hub & Spoke content model.

When developers use tools like Blackbox AI without strict E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) guidelines, they sacrifice code provenance.

Failing to maintain a rigid structural architecture, similar to a hybrid 3-column layout capped at a 1060px max-width, results in environments where side-rail dependencies silently fail.

Risk Mitigation Tip: When benchmarking GCC performance against global standards, AI-driven productivity metrics often hide severe architectural bloat.

Do not let raw output speed blind you to the degradation of your core system reliability.

How to Implement the 5 AI Governance Rules

To prevent your agentic AI from burying you in unmaintainable technical debt, your organization must enforce strict operational boundaries across the entire software development lifecycle.

Rule 1: Secure the IDE Layer

Choosing the wrong AI IDE extension means handing over your proprietary business logic to a third-party black box.

You must secure your local environment against the vulnerabilities in the OWASP Top 10 for LLM Applications, specifically LLM02: Insecure Output Handling (as numbered in version 1.1 of the list).
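Insecure output handling comes down to trusting whatever the model emits. As a minimal sketch, assume your assistant proposes shell commands and your team maintains an allowlist. The `ALLOWED_PROGRAMS` set and the `is_command_allowed` helper are hypothetical names for illustration, not part of any IDE extension. A pre-execution check might look like this:

```python
import shlex

# Hypothetical allowlist; a real team would define and review its own.
ALLOWED_PROGRAMS = {"git", "pytest", "ls"}

# Characters that enable chaining, piping, redirection, or substitution in a shell.
SHELL_METACHARACTERS = set(";&|`$><\n")


def is_command_allowed(command: str, allowed=ALLOWED_PROGRAMS) -> bool:
    """Return True only if an AI-suggested command is a single allowlisted program call."""
    # Treat model output as untrusted input: reject anything that could chain commands.
    if any(ch in SHELL_METACHARACTERS for ch in command):
        return False
    try:
        tokens = shlex.split(command)
    except ValueError:
        # shlex raises ValueError on unbalanced quotes; refuse rather than guess.
        return False
    return bool(tokens) and tokens[0] in allowed
```

Here `is_command_allowed("git status")` passes, while `git status; rm -rf /` is rejected because of the `;`. An allowlist like this is deliberately conservative, and it will not catch a harmful use of an allowed program, so it complements human review rather than replacing it.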

Deep Dive: Read our complete breakdown on the Cline vs Continue: Best AI coding assistant extension to lock down your stack.

Rule 2: Mandate Agile Auditing

Human PR reviews are failing. If AI generates code 10x faster than your reviewers can read it, your development team can quietly generate technical debt 10x faster.

Top-performing Scrum teams must adapt their definition of done to catch hallucinated logic.
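What an adapted definition of done contains is up to each team. As a rough sketch, assume three extra criteria for AI-generated pull requests: the tool is labeled, tests cover the generated logic, and a human has walked through the code. The `PullRequest` record and its field names below are hypothetical and do not correspond to any tracker's API.

```python
from dataclasses import dataclass


@dataclass
class PullRequest:
    """Hypothetical PR record; field names are illustrative only."""
    ai_generated: bool
    provenance_labeled: bool = False  # PR states which AI tool produced the code
    tests_added: bool = False         # new or updated tests cover the change
    human_walkthrough: bool = False   # a reviewer confirmed they can explain the logic


def unmet_done_criteria(pr: PullRequest) -> list[str]:
    """List the extra definition-of-done items an AI-generated PR still fails."""
    if not pr.ai_generated:
        return []  # this sketch only models the extra criteria for AI-generated changes
    checks = {
        "label the AI tool that produced the code": pr.provenance_labeled,
        "add tests covering the generated logic": pr.tests_added,
        "record a human walkthrough of the logic": pr.human_walkthrough,
    }
    return [item for item, done in checks.items() if not done]
```

A story would count as done only when `unmet_done_criteria` returns an empty list. The human walkthrough item is the one aimed at hallucinated logic: someone on the team has to be able to explain what the code does.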

Deep Dive: Learn exactly How to audit AI-generated code in Scrum sprints before faulty logic hits production.

Rule 3: Deploy Autonomous Verification

Human reviewers are rubber-stamping AI-generated pull requests out of sheer fatigue.

To maintain SOC 2 Type II compliance, you need specialized tools to actually verify what your AI wrote.

Deep Dive: Upgrade your CI/CD pipeline with the Best AI agents for autonomous code review 2026 to catch critical security flaws.

Rule 4: Enforce Architectural Empathy

Autonomous developers like Devin are great at writing boilerplate code, but terrible at long-term architectural empathy.

This directly impacts continuous vulnerability management and degrades the overall health of the system.

Deep Dive: Our guide, Managing technical debt in the age of Devin and Cline, is mandatory reading for CTOs.

Auditor's Perspective: Using platforms like Fireflies AI to transcribe developer meetings is excellent for documentation, but applying that same unverified automation to core logic loops leads directly to unmaintainable technical debt.

Always maintain human authorization controls.
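One way to read "human authorization controls" is that a change cannot merge on bot approvals alone. The sketch below assumes approvals arrive as simple records with a reviewer name and a bot flag. The `Approval` type and `has_human_authorization` function are hypothetical and not tied to any code-hosting platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Approval:
    """Hypothetical approval record for a proposed change."""
    reviewer: str
    is_bot: bool


def has_human_authorization(author: str, approvals: list[Approval]) -> bool:
    """True if at least one approval comes from a human other than the change's author."""
    return any(not a.is_bot and a.reviewer != author for a in approvals)
```

Under this rule, a pull request opened by an agent and approved only by another agent stays blocked until a person signs off. It also blocks self-approval, which is a common expectation in change management reviews.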

Rule 5: Protect the Agile Backlog

Firing your Scrum Master and letting GPT-5.1 write your user stories is a one-way ticket to feature factory hell.

Letting a bot dictate your backlog carries serious alignment risks and potential GDPR Article 22 violations.

Deep Dive: Discover why the AI Scrum Master: Automating user stories with GPT-5.1 trend is dangerous and how to fix your prompts.

Frequently Asked Questions (FAQ)

Vibe coding is the practice of relying heavily on AI agents to write logic based on superficial prompts, bypassing deep architectural understanding. To understand how this impacts your local environment, review our analysis on the Cline vs Continue: Best AI coding assistant extension.

Senior developers know vibe coding is bad because it creates rapid boilerplate while sacrificing architectural empathy, resulting in legacy spaghetti code. To reverse this trend, explore our strategies for Managing technical debt in the age of Devin and Cline.

Because AI generates code rapidly, your team is quietly generating technical debt 10x faster. Agents write unmaintainable loops without holistic oversight. Learn to measure and control this in our guide on How to audit AI-generated code in Scrum sprints.

Unreviewed AI output introduces vulnerabilities tied to the OWASP Top 10 for LLMs, such as insecure output handling. To automatically mitigate these specific security threats, implement the Best AI agents for autonomous code review 2026.

Implementation requires mapping your processes to the NIST AI RMF and enforcing strict CI/CD auditing to keep codebases from collapsing. Begin by adopting our step-by-step framework on How to audit AI-generated code in Scrum sprints.

An AI coding agent similar to Devin is only safe within a strict governance framework; otherwise, you hand proprietary logic to a third-party black box. Secure your data by evaluating the Cline vs Continue: Best AI coding assistant extension.

Auditing requires continuous vulnerability management aligned with CIS Controls v8. Because human PR reviews are failing, you must upgrade your auditing pipeline by integrating the Best AI agents for autonomous code review 2026.

Autocomplete AI suggests line-by-line syntax, whereas agentic coding autonomously executes complex refactoring tasks across files, increasing the risk of unmaintainable logic. To control these advanced agents, read our insights on Managing technical debt in the age of Devin and Cline.

Context window collapse happens when agents lose track of your system architecture, allowing hallucinated logic to hit production. Prevent this by enforcing modular structures and reading our Agile checklist on How to audit AI-generated code in Scrum sprints.
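"Modular structures" is easier to enforce when it becomes a measurable budget. As an illustrative sketch, assume a per-file line limit that CI can check. The 400-line default and the `oversized_modules` name are assumptions, and line count is only a crude proxy for how much an agent must hold in context.

```python
def oversized_modules(line_counts: dict[str, int], max_lines: int = 400) -> list[str]:
    """Return the paths whose line count exceeds max_lines, sorted for stable output."""
    return sorted(path for path, count in line_counts.items() if count > max_lines)
```

For example, `oversized_modules({"billing.py": 1200, "utils.py": 80})` returns `["billing.py"]`, which a pipeline could surface as a prompt to split the file before handing it to an agent.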

Unregulated vibe coding bypasses crucial change management controls, directly threatening SOC 2 Type II compliance and risking GDPR violations. Ensure your automated workflows are legally sound by reviewing the AI Scrum Master: Automating user stories with GPT-5.1.

Sources & References

External Sources

- National Institute of Standards and Technology (NIST): Artificial Intelligence Risk Management Framework (AI RMF 1.0)

- European Union: General Data Protection Regulation (GDPR) Article 22: Automated individual decision-making, including profiling

- Center for Internet Security (CIS): CIS Controls v8

Internal Sources

- The Cline vs Continue Truth No IDE Vendor Admits

- 4 Steps to Audit AI-Generated Code & Stop Tech Debt

- Best AI Agents for Autonomous Code Review 2026 Revealed

- The Devin Architecture Flaw Exploding Your Tech Debt

- Automating User Stories? Why AI Scrum Masters Fail Agile