4 Steps to Audit AI-Generated Code & Stop Tech Debt

Executive Snapshot: The Agile AI Audit

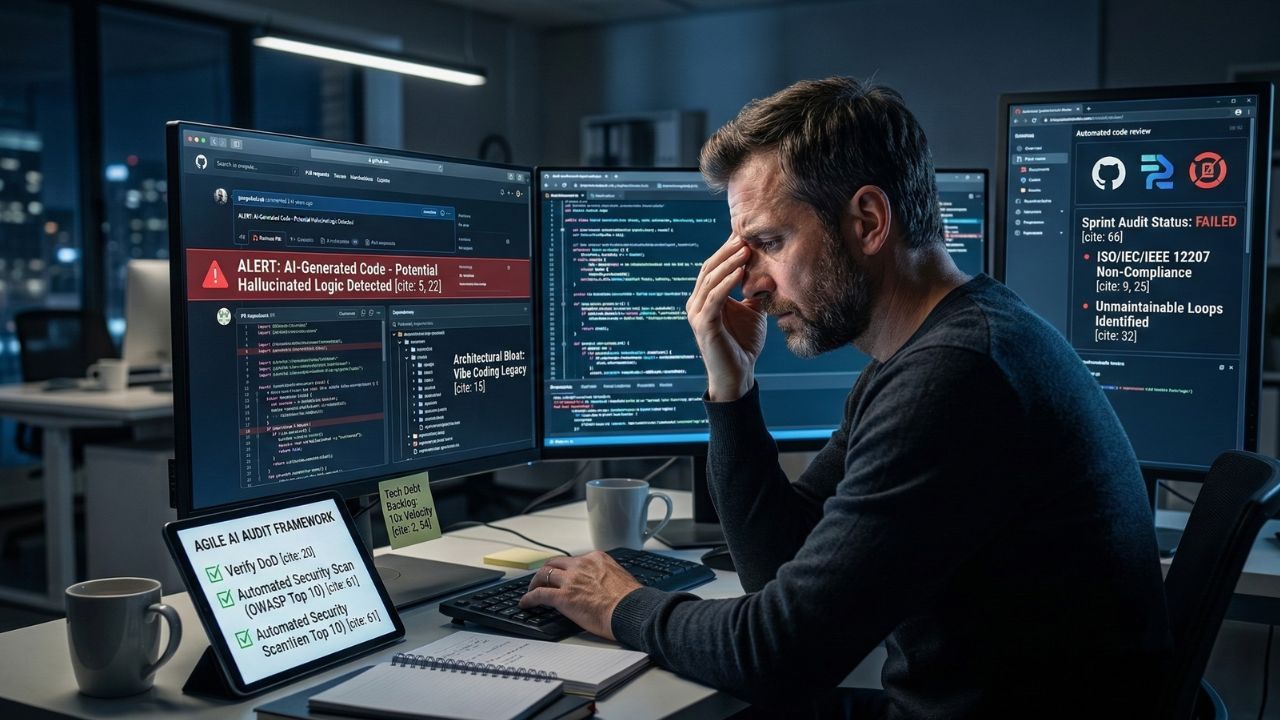

- The Problem: Human reviewers are rubber-stamping AI-generated pull requests out of sheer fatigue.

- The Framework: Implement this Agile checklist to catch errors during active sprints.

- Compliance Target: Enforce ISO/IEC/IEEE 12207 life cycle processes during Scrum sprints.

- The Goal: Stop codebase rot and prevent compounding technical debt from entering production.

If AI generates code 10x faster, your development team can quietly generate technical debt 10x faster too.

Human PR reviews are failing under the crushing volume of this automated output.

As detailed in our master guide, Why "Vibe Coding" Is Destroying Your Codebase, developers operating on autopilot may feel incredibly fast while the codebase degrades underneath them.

You must learn how to audit AI-generated code in Scrum sprints before hallucinated logic hits production.

The Hidden Trap: What Most Teams Get Wrong About AI Code Audits

Most organizations believe their existing peer-review process is robust enough to handle LLM-assisted development. This is a critical mistake.

"Vibe coding" feels incredibly fast until you realize your engineers no longer understand their own architecture.

What most enterprises miss is the compounding nature of AI-generated technical debt: each unreviewed merge becomes the foundation for the next round of generated code.

When benchmarking GCC performance against global standards, AI-driven productivity metrics often hide severe architectural bloat.

Reviewers become exhausted and stop tracing complex dependencies. Do not let raw output speed blind you to the degradation of your core system reliability.

4 Steps to Audit AI-Generated Code in Scrum Sprints

To prevent your agentic AI from burying you in unmaintainable technical debt, your organization must enforce strict operational boundaries across the entire software development lifecycle.

Here is the exact Agile checklist your team needs to implement.

Step 1: Update the Scrum Definition of Done for AI

Your traditional Definition of Done (DoD) is no longer sufficient for code generated at machine speed.

Top-performing Scrum teams must adapt their definition of done to catch hallucinated logic.

Every ticket must require explicit verification of AI-authored components before the code is merged.
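One way to make that requirement checkable is to express the AI-specific DoD items as a small function your tooling can run. The sketch below is illustrative only: the `Ticket` fields and the `PROJ-101` key are hypothetical and do not correspond to any real tracker's API.

```python
from dataclasses import dataclass, field


@dataclass
class Ticket:
    """Hypothetical ticket record; field names are illustrative only."""
    key: str
    ai_authored_files: list[str] = field(default_factory=list)
    human_verified_files: set[str] = field(default_factory=set)
    tests_passing: bool = False


def unmet_dod_items(ticket: Ticket) -> list[str]:
    """Return the Definition of Done items this ticket still fails."""
    unmet = []
    if not ticket.tests_passing:
        unmet.append("automated tests are not passing")
    # Every AI-authored file needs an explicit human sign-off before merge.
    for path in ticket.ai_authored_files:
        if path not in ticket.human_verified_files:
            unmet.append(f"AI-authored file not verified: {path}")
    return unmet


ticket = Ticket(
    key="PROJ-101",
    ai_authored_files=["billing/invoice.py", "billing/tax.py"],
    human_verified_files={"billing/invoice.py"},
    tests_passing=True,
)
print(unmet_dod_items(ticket))
# prints ['AI-authored file not verified: billing/tax.py']
```

An empty list means the ticket meets the AI-specific part of the DoD; anything else is a concrete reason to keep it out of "Done".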

Step 2: Enforce Strict Compliance Mapping

Your automated output must meet rigorous global standards. Specifically, you must map your software life cycle processes to ISO/IEC/IEEE 12207.

This ensures that every line of generated code has clear provenance and accountability.
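Provenance can be recorded directly in commit messages and checked mechanically. The sketch below assumes a team convention of commit trailers named `AI-Assisted` and `AI-Tool`, which are hypothetical names chosen for illustration, alongside the commonly used `Reviewed-by` trailer. ISO/IEC/IEEE 12207 does not prescribe this format.

```python
import re

# Assumed trailer names; pick whatever convention your team agrees on.
TRAILER_PATTERN = re.compile(
    r"^(AI-Assisted|AI-Tool|Reviewed-by):\s*(.+)$", re.MULTILINE
)


def parse_provenance(commit_message: str) -> dict[str, str]:
    """Extract the provenance trailers from a commit message."""
    return {key: value.strip() for key, value in TRAILER_PATTERN.findall(commit_message)}


def is_auditable(commit_message: str) -> bool:
    """True if AI involvement is declared and AI-assisted work names a reviewer."""
    trailers = parse_provenance(commit_message)
    if "AI-Assisted" not in trailers:
        return False  # provenance was never declared
    if trailers["AI-Assisted"].lower() == "yes":
        return "Reviewed-by" in trailers  # AI-assisted work needs a named human
    return True


message = (
    "Add tax rounding\n\n"
    "AI-Assisted: yes\n"
    "AI-Tool: example-assistant\n"
    "Reviewed-by: Dana <dana@example.com>\n"
)
print(is_auditable(message))           # prints True
print(is_auditable("Fix typo\n"))      # prints False
```

Run as a pre-merge check, a function like this rejects commits with no declared provenance, which gives auditors a trail that does not depend on anyone's memory.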

Expert Insight: Failing to maintain a rigid structural architecture results in environments where side-rail dependencies silently fail.

Always prioritize architectural empathy over rapid boilerplate generation.

Step 3: Integrate Autonomous Code Review Tools

Human engineers simply can't keep up with AI output.

Upgrade your CI/CD pipeline with autonomous code review agents (see our roundup of the best AI agents for autonomous code review 2026) to catch critical security flaws before they merge.

These tools help you verify what your AI actually wrote and produce the change-management evidence that a SOC 2 Type II audit expects.
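Whichever review agent you choose, the pipeline still needs a gate that turns its findings into a pass or fail decision. The sketch below assumes the tool can export findings as a JSON array of objects with `severity`, `rule`, and `file` keys. That schema is an assumption for illustration, not the output format of any particular product.

```python
import json

BLOCKING_SEVERITIES = {"critical", "high"}  # assumed severity labels


def gate(findings_json: str) -> int:
    """Return a process exit code: 0 allows the merge, 1 blocks it."""
    findings = json.loads(findings_json)
    blocking = [
        f for f in findings
        if f.get("severity", "").lower() in BLOCKING_SEVERITIES
    ]
    for finding in blocking:
        rule = finding.get("rule", "unknown rule")
        path = finding.get("file", "unknown file")
        print(f"BLOCKING {finding['severity']}: {rule} in {path}")
    return 1 if blocking else 0


report = '[{"severity": "High", "rule": "sql-injection", "file": "db.py"}]'
print(gate(report))  # prints the blocking finding, then 1
```

In a real pipeline you would pass the return value to `sys.exit` so the CI job fails when a blocking finding is present.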

Step 4: Measure Compounding Technical Debt

Autonomous software engineers frequently write unmaintainable loops. During sprint retrospectives, teams must actively track the complexity of LLM-generated logic.
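To give the retrospective a number to track, you can approximate per-function complexity with Python's standard `ast` module. This is a minimal sketch: it counts branching constructs as a rough stand-in for cyclomatic complexity, the budget value is an arbitrary assumption, and a dedicated analyzer would be more accurate.

```python
import ast

# Node types that add a decision point. This only approximates
# cyclomatic complexity; it is not a replacement for a real analyzer.
BRANCH_NODES = (ast.If, ast.For, ast.While, ast.ExceptHandler, ast.BoolOp, ast.IfExp)


def function_complexity(source: str) -> dict[str, int]:
    """Map each function name in `source` to 1 + its number of branch nodes."""
    scores = {}
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            branches = sum(isinstance(child, BRANCH_NODES) for child in ast.walk(node))
            scores[node.name] = 1 + branches
    return scores


def over_budget(source: str, budget: int = 10) -> list[str]:
    """Return the names of functions whose score exceeds the budget."""
    return sorted(name for name, score in function_complexity(source).items() if score > budget)


sample = '''
def simple(x):
    return x + 1

def tangled(items):
    total = 0
    for item in items:
        if item > 0 and item % 2 == 0:
            total += item
        elif item < 0:
            total -= item
    return total
'''
print(function_complexity(sample))    # prints {'simple': 1, 'tangled': 5}
print(over_budget(sample, budget=3))  # prints ['tangled']
```

Tracking these scores sprint over sprint, split by AI-authored and human-authored files, shows whether generated logic is getting harder to maintain.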

For CTOs, managing technical debt in the age of Devin and Cline is mandatory.

Data Table: Human PR Reviews vs. Autonomous Verification

| Audit Method | Processing Speed | Threat Detection Capabilities | Tech Debt Focus |
|---|---|---|---|
| Human PR Reviews | Slow, bottlenecked by sheer fatigue | Prone to missing hallucinated logic | Varies heavily by developer experience |
| Autonomous AI Agents | Instant, scales effortlessly with output | Aligned with SOC 2 Type II (CC8.1) standards | Consistently tracks unmaintainable loops |

Conclusion: Upgrade Your Review Pipeline Today

If your developers are using AI without a structured Agile auditing framework, your architecture is already quietly rotting.

You need specialized tools to actually verify what your AI wrote. Ready to secure your workflow and eliminate human bottlenecks?

Explore the best AI agents for autonomous code review 2026 revealed in our next breakdown to upgrade your tech stack.

Frequently Asked Questions (FAQ)

The entire Scrum team shares responsibility, but the execution requires a modern approach. Because human PR reviews are failing, teams must rely on senior engineers alongside automated autonomous code review agents to properly verify architecture.

Hallucinations frequently manifest as unmaintainable loops or silent dependency failures. Top-performing Scrum teams must adapt their definition of done to catch hallucinated logic by running strict, specialized architectural audits before merging.

Absolutely. Standard criteria are insufficient for the speed of LLMs. Your team must adapt its definition of done to specifically target hallucinated logic and strictly enforce ISO/IEC/IEEE 12207 life cycle processes.

The most effective tools remove the heavy burden from fatigued human developers. You should discover the best AI agents for autonomous code review 2026 to catch critical security flaws directly within your active CI/CD pipeline.

Because AI generates code 10x faster, your development team is quietly generating technical debt 10x faster. To reclaim sprint capacity, teams must use specialized tools to automate verification of what the AI wrote.

Yes, autonomous software engineers write unmaintainable loops without holistic oversight. This rapid boilerplate creation completely sacrifices architectural empathy, resulting in legacy spaghetti code.

Security tests must be embedded directly into the CI/CD pipeline. You must guard against the vulnerabilities in the OWASP Top 10 for LLM Applications, specifically LLM02: Insecure Output Handling (listed as LLM05: Improper Output Handling in the 2025 edition), through continuous automated verification.
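In practice, guarding against insecure output handling means treating model output as untrusted input. The sketch below shows two common precautions using only the Python standard library: escaping output before it is rendered as HTML, and checking a model-suggested command against an allowlist before it is run. The allowlist contents are an assumption for illustration.

```python
import html
import shlex

ALLOWED_COMMANDS = {"pytest", "ruff"}  # hypothetical allowlist


def render_safely(model_output: str) -> str:
    """Escape model output so it cannot inject markup into a page."""
    return html.escape(model_output)


def validate_command(model_output: str) -> list[str]:
    """Split a suggested command into argv and reject anything not allowlisted."""
    argv = shlex.split(model_output)
    if not argv or argv[0] not in ALLOWED_COMMANDS:
        raise ValueError(f"command not allowed: {model_output!r}")
    # Pass the returned list to subprocess.run without shell=True so that
    # shell metacharacters in the arguments are never interpreted.
    return argv


print(render_safely("<script>alert(1)</script>"))
# prints &lt;script&gt;alert(1)&lt;/script&gt;
print(validate_command("pytest -q tests/"))
# prints ['pytest', '-q', 'tests/']
```

A suggestion such as `rm -rf /` raises `ValueError` instead of reaching a shell, which is the behavior you want a pipeline test to assert.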

Yes, and it is highly recommended. Because human reviewers are rubber-stamping AI-generated pull requests out of sheer fatigue, specialized autonomous code review agents are needed to actually verify what your AI wrote.

Proper documentation requires establishing clear code provenance. Teams should tag commits to indicate AI generation and enforce ISO/IEC/IEEE 12207 life cycle processes during Scrum sprints to maintain an auditable trail.

Code that fails must be immediately rejected to prevent compounding technical debt. This highlights the necessity of mapping to the NIST AI RMF and enforcing continuous vulnerability management before any merge.