Cline vs Continue: The Truth No IDE Vendor Admits

Executive Snapshot: The Bottom Line

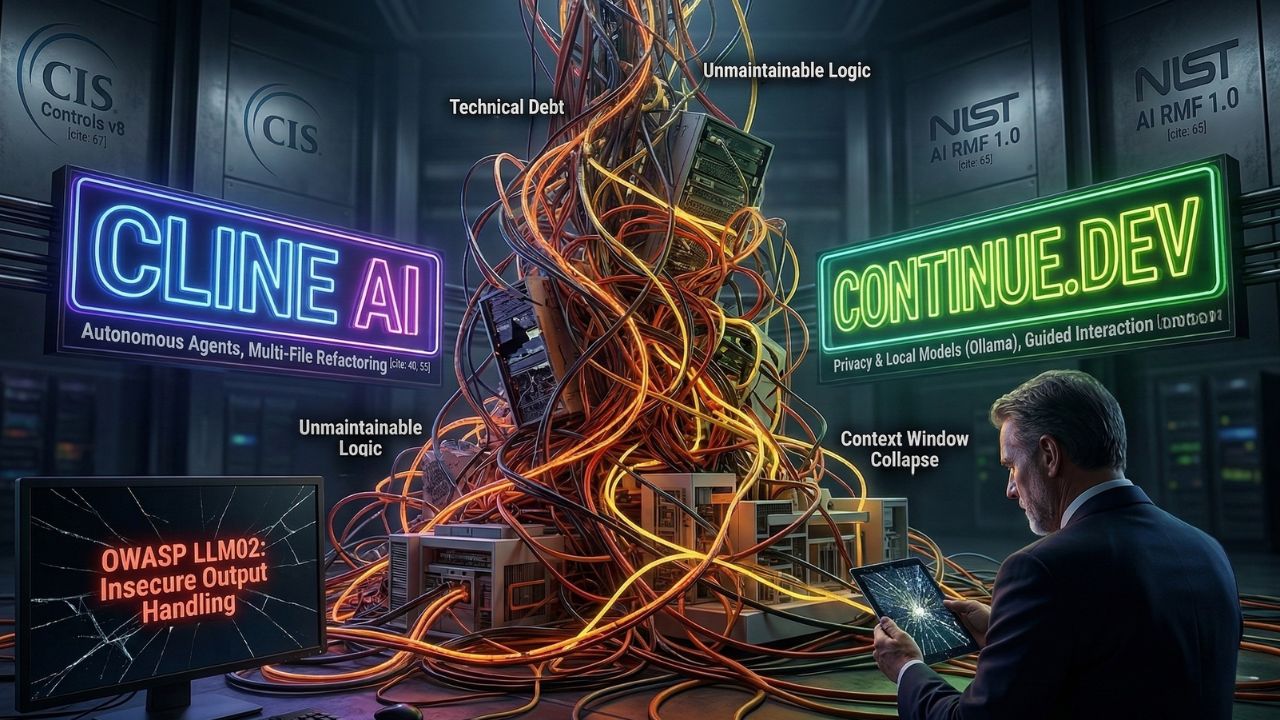

- The Cline vs Continue debate over the best AI coding assistant extension frequently ignores a serious risk: proprietary code leaking to third-party model providers.

- Engineering teams must secure local environments against OWASP Top 10 for LLMs risks, specifically LLM02: Insecure Output Handling.

- Continue leads on privacy: it can connect to local models served through Ollama, which makes it a strong fit for teams behind strict corporate proxies.

- Cline offers superior agentic refactoring but requires rigorous protocols to manage API keys securely.

Picking an agentic IDE extension feels like a constant trade-off between blazing-fast productivity and enterprise data privacy.

Choose the wrong AI IDE extension and you may be handing your proprietary business logic to a third-party black box, exposing your entire architecture to serious risk.

The real deciding factor between Cline and Continue is enterprise data governance, so lock down your stack before you roll either one out.

As detailed in our master guide, Why "Vibe Coding" Is Destroying Your Codebase, securing the IDE layer is the mandatory first step in keeping your team from writing unmaintainable spaghetti code.

The Core Debate: Autocomplete vs Agentic Autonomy

When engineering teams evaluate Cursor alternatives, they often focus solely on generation speed rather than structural safety.

However, the difference between standard autocomplete AI and true agentic coding is profound and requires distinct governance.

Autocomplete suggests line-by-line syntax, whereas agentic coding autonomously executes complex refactoring tasks across multiple files.

While autonomous execution sounds ideal, it dramatically increases the risk of unmaintainable logic if not governed properly.

Relying heavily on these tools to write logic based on superficial prompts is the definition of vibe coding.

Feature Comparison Data

| Feature/Metric | Cline | Continue.dev | Governance Impact |
|---|---|---|---|
| Model hosting | Primarily cloud APIs (local providers such as Ollama are also supported) | Local and cloud models | High (data privacy) |
| Refactoring | Advanced autonomous agents | Strong, but developer-guided | High (technical debt) |
| Token costs | Usually tied to commercial APIs | Can be zero per token with local models | Medium (budget) |
| Claude 3.5 Sonnet support | Yes, with an Anthropic API key | Yes, with an Anthropic API key | Low (both support it) |

The Hidden Trap: What Most Teams Get Wrong About Agentic IDEs

The biggest misconception about agentic IDE extensions is that raw output speed equals system health.

Autonomous agents are great at writing boilerplate code but poor at preserving long-term architectural intent.

When you unleash an agent across your repository, you risk context window collapse.

Context window collapse happens when the relevant parts of your system no longer fit in, or get crowded out of, the agent's context window, so it loses track of your architecture and hallucinated logic can reach production.
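One practical guard against context window collapse is to check, before handing a task to an agent, whether the files it must read plausibly fit in the model's context at all. The sketch below is a minimal Python example under stated assumptions: it uses a rough four-characters-per-token heuristic instead of a real tokenizer, and the 200,000-token budget and 4,000-token reserve are illustrative placeholders, not values taken from Cline or Continue.

```python
from pathlib import Path

# Assumption: roughly 4 characters per token. Real tokenizers vary by model
# and language, so treat every number this produces as an estimate.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Rough token count using a fixed characters-per-token ratio (rounded up)."""
    return (len(text) + CHARS_PER_TOKEN - 1) // CHARS_PER_TOKEN


def fits_in_context(files: dict[str, str], budget_tokens: int,
                    reserve_tokens: int = 4_000) -> tuple[bool, int]:
    """Return (fits, estimated_tokens) for the files an agent would need to read.

    reserve_tokens is a hypothetical allowance for instructions,
    tool output, and the model's reply.
    """
    total = sum(estimate_tokens(src) for src in files.values())
    return total + reserve_tokens <= budget_tokens, total


def load_sources(root: str, pattern: str = "*.py") -> dict[str, str]:
    """Read every file under root that matches pattern."""
    return {
        str(path): path.read_text(encoding="utf-8", errors="replace")
        for path in Path(root).rglob(pattern)
        if path.is_file()
    }


if __name__ == "__main__":
    sources = load_sources(".")
    ok, total = fits_in_context(sources, budget_tokens=200_000)
    print(f"~{total} tokens across {len(sources)} files; fits in budget: {ok}")
```

If the estimate does not fit, splitting the work into smaller, module-scoped requests keeps the agent reasoning over code it can actually see.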

Expert Pro-Tip: Do not let raw output speed blind you to the degradation of your core system reliability.

Our guide, Managing technical debt in the age of Devin and Cline, is essential reading for CTOs looking to stop codebase rot.

Governing the Black Box: OWASP LLM02

Any comparison of Cline and Continue as AI coding assistant extensions must center on output handling protocols.

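You must secure your local environment against the OWASP Top 10 for LLM Applications, specifically LLM02: Insecure Output Handling (renumbered LLM05: Improper Output Handling in the 2025 edition of the list).

Accepting AI output without review is exactly the pattern this category warns about: generated code is trusted and passed to downstream systems without validation.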

If you blindly accept multi-file refactors from an autonomous agent, you are bypassing crucial change management controls.

This is why the practices in our guide, How to audit AI-generated code in Scrum sprints, form a necessary operational boundary.
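In code, treating agent output as untrusted can start with a gate that validates every proposed change and refuses to write anything without explicit human approval. The sketch below is a hypothetical Python example: `ProposedEdit`, `validate_edits`, and `apply_if_approved` are illustrative names, not Cline or Continue APIs, and the two checks (workspace confinement and a syntax check for Python files) are a minimal starting point rather than a complete LLM02 control. It needs Python 3.9+ for `Path.is_relative_to`.

```python
import ast
from dataclasses import dataclass
from pathlib import Path


@dataclass
class ProposedEdit:
    """One file change proposed by an agent (hypothetical structure)."""
    path: str         # path relative to the workspace root
    new_content: str  # full replacement content for the file


def validate_edits(workspace: str, edits: list[ProposedEdit]) -> list[str]:
    """Treat agent output as untrusted input; return a list of problems found."""
    root = Path(workspace).resolve()
    problems: list[str] = []
    for edit in edits:
        target = (root / edit.path).resolve()
        # Reject writes that escape the workspace, e.g. "../../.ssh/config".
        if not target.is_relative_to(root):
            problems.append(f"{edit.path}: outside workspace")
            continue
        # Syntax-check Python files before anything touches disk.
        if target.suffix == ".py":
            try:
                ast.parse(edit.new_content, filename=edit.path)
            except SyntaxError as exc:
                problems.append(f"{edit.path}: syntax error on line {exc.lineno}")
    return problems


def apply_if_approved(workspace: str, edits: list[ProposedEdit],
                      approved: bool) -> bool:
    """Write the edits only if every check passes AND a human approved them."""
    if not approved or validate_edits(workspace, edits):
        return False
    root = Path(workspace).resolve()
    for edit in edits:
        target = root / edit.path
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_text(edit.new_content, encoding="utf-8")
    return True
```

A syntax check only catches broken code; the human approval flag is what enforces change management, because syntactically valid code can still be logically wrong or insecure.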

Conclusion

Selecting the right AI coding assistant is only the first step in protecting your intellectual property.

Once you have locked down your IDE stack, you must establish a rigid auditing pipeline to verify the generated code before it merges.

Because AI generates code so rapidly, a team without that pipeline can quietly accumulate technical debt faster than manual review was ever designed to catch.
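A first automated stage of that auditing pipeline can run on every pull request and flag risky constructs in the lines an assistant added. The Python sketch below reads a unified diff on standard input (for example, the output of `git diff main...HEAD`, where the branch name is an assumption). The regex patterns are illustrative and deliberately incomplete; they are meant to complement, not replace, human review and a dedicated static-analysis tool.

```python
import re
import sys

# Illustrative, deliberately incomplete patterns. Expect false positives and
# false negatives; this is a tripwire for reviewers, not a security scanner.
RISKY_PATTERNS = {
    "dynamic code execution": re.compile(r"\b(eval|exec)\s*\("),
    "shell injection risk": re.compile(r"shell\s*=\s*True"),
    "unsafe deserialization": re.compile(r"\bpickle\.loads?\s*\("),
    "possible hardcoded secret": re.compile(
        r"(?i)(api[_-]?key|secret|token)\s*=\s*['\"][^'\"]{8,}['\"]"
    ),
}


def audit_diff(diff_text: str) -> list[tuple[str, int, str]]:
    """Return (file, new_line_number, reason) for risky lines added in a unified diff."""
    findings = []
    current_file = "?"
    new_line = 0
    for line in diff_text.splitlines():
        if line.startswith("+++ "):
            current_file = line[4:].removeprefix("b/")
        elif line.startswith("@@"):
            # Hunk header "@@ -a,b +c,d @@": new-file numbering restarts at c.
            match = re.search(r"\+(\d+)", line)
            new_line = int(match.group(1)) if match else 0
        elif line.startswith("+"):
            for reason, pattern in RISKY_PATTERNS.items():
                if pattern.search(line[1:]):
                    findings.append((current_file, new_line, reason))
            new_line += 1
        elif line.startswith(("-", "\\")):
            continue  # removed lines and "\ No newline" markers keep the counter
        else:
            new_line += 1  # unchanged context line
    return findings


if __name__ == "__main__":
    results = audit_diff(sys.stdin.read())
    for path, lineno, reason in results:
        print(f"{path}:{lineno}: {reason}")
    # A non-zero exit code lets CI block the merge until a human signs off.
    sys.exit(1 if results else 0)
```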

Frequently Asked Questions (FAQ)

Which extension is better for strict data privacy?

Continue is generally the better choice. Because it can connect directly to local models instead of a third-party black box, your proprietary code need not leave your machine when it is configured that way, which significantly reduces the risk of IP leaks.

Which extension produces better code?

Code quality depends largely on the underlying LLM, but Cline's autonomous agents handle complex refactoring exceptionally well. Cline excels at multi-file architecture changes, whereas Continue is more often used for guided, interactive coding sessions.

How do I install the Cline extension?

Install Cline directly from your IDE's extension marketplace: search for Cline, click Install, then configure your model API credentials securely using your enterprise secrets manager.

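Can Continue connect to local models like those served by Ollama?

Yes. Continue can connect to local models served through Ollama, which makes it a strong fit for environments behind a strict corporate proxy: model requests stay on your machine instead of going to an external API. If your policy forbids any outbound traffic, also disable Continue's anonymous telemetry.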
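To back that up in practice, it helps to verify that every model endpoint your team configures actually points at the local machine. The minimal Python sketch below assumes you collect the endpoint URLs from your own configuration (it does not parse Continue's config format), and the model names in the example are hypothetical; the only real-world detail it relies on is that Ollama listens on localhost port 11434 by default. A loopback model endpoint covers model traffic only, so telemetry and any other configured models still need separate checks.

```python
import ipaddress
from urllib.parse import urlparse

# Ollama's HTTP API listens on localhost port 11434 by default.
OLLAMA_DEFAULT_URL = "http://localhost:11434"


def is_local_endpoint(url: str) -> bool:
    """True only if the URL's host is 'localhost' or a loopback IP address."""
    host = urlparse(url).hostname
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        # Any other hostname could resolve to a remote machine, so fail closed.
        return False


def remote_endpoints(endpoints: dict[str, str]) -> list[str]:
    """Return the names of configured models whose endpoints leave the machine."""
    return [name for name, url in endpoints.items() if not is_local_endpoint(url)]


if __name__ == "__main__":
    # Hypothetical model names and URLs for illustration.
    configured = {
        "chat": OLLAMA_DEFAULT_URL,
        "autocomplete": "http://127.0.0.1:11434",
        "fallback": "https://api.anthropic.com",
    }
    print(remote_endpoints(configured))  # ['fallback']
```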

How do token costs compare between Cline and Continue?

Token costs depend heavily on your organizational setup. Cline is typically paired with paid commercial APIs (although it can also use local providers such as Ollama), while Continue can cost nothing per token if you route requests through local open-source models.
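A quick way to compare the two setups is to estimate monthly spend from token volume. The Python sketch below is plain arithmetic under stated assumptions: the usage figures and per-million-token prices are hypothetical placeholders, so substitute your provider's current price sheet and your own usage logs. Setting both prices to zero models a local setup, which removes per-token fees but not hardware and operating costs.

```python
from dataclasses import dataclass


@dataclass
class Usage:
    """Monthly token volume for one developer (figures you supply)."""
    input_tokens: int
    output_tokens: int


def monthly_cost(usage_per_dev: Usage, developers: int,
                 input_price_per_mtok: float,
                 output_price_per_mtok: float) -> float:
    """Team-wide monthly API cost, with prices quoted per million tokens."""
    per_dev = (usage_per_dev.input_tokens * input_price_per_mtok
               + usage_per_dev.output_tokens * output_price_per_mtok) / 1_000_000
    return per_dev * developers


if __name__ == "__main__":
    usage = Usage(input_tokens=20_000_000, output_tokens=2_000_000)  # hypothetical
    cloud = monthly_cost(usage, developers=10,
                         input_price_per_mtok=3.0, output_price_per_mtok=15.0)
    local = monthly_cost(usage, developers=10,
                         input_price_per_mtok=0.0, output_price_per_mtok=0.0)
    print(f"cloud: ${cloud:,.2f}/month, local: ${local:,.2f}/month")
```

Agentic workflows often re-read files and tool output at each step, so input tokens can dominate the bill; measure real usage before budgeting.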

Does Continue support Claude 3.5 Sonnet?

Yes. Continue supports Claude 3.5 Sonnet through its Anthropic provider; add a valid Anthropic API key to authenticate the connection and use the model's reasoning capabilities within your editor.

How do Cline's autonomous agents handle complex refactoring?

They read your directory structure, map the relationships between files, and execute multi-file edits autonomously, rather than just suggesting isolated, line-by-line syntax improvements.

Is Continue open source?

Yes. Continue is an open-source AI coding assistant, and that transparency is a major benefit for enterprise security teams that must audit their IDE tooling against internal compliance and governance standards.

How should I manage API keys securely in Cline?

Never hardcode keys into your workspace files. Rely on your operating system's environment variables or a dedicated enterprise secrets manager to prevent accidental credential leaks.
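For scripts and tooling that run alongside the extension, the same rule applies: read the key from the environment at runtime, never log it in full, and check that it has not leaked into the workspace. The Python sketch below is illustrative; the `ANTHROPIC_API_KEY` default and the helper names are assumptions for this example, and it does not change how Cline itself stores the key you enter in its settings.

```python
import os
from pathlib import Path


def require_api_key(var_name: str = "ANTHROPIC_API_KEY") -> str:
    """Read an API key from the environment and fail loudly if it is missing."""
    key = os.environ.get(var_name, "").strip()
    if not key:
        raise RuntimeError(
            f"{var_name} is not set; export it or inject it from your secrets manager"
        )
    return key


def mask(key: str, visible: int = 4) -> str:
    """Hide all but the last few characters so keys never appear in logs."""
    if len(key) <= visible:
        return "*" * len(key)
    return "*" * (len(key) - visible) + key[-visible:]


def files_containing(root: str, secret: str,
                     skip_dirs: tuple[str, ...] = (".git", "node_modules")) -> list[str]:
    """List files under root whose text contains the secret verbatim."""
    hits = []
    for path in Path(root).rglob("*"):
        if not path.is_file() or any(part in skip_dirs for part in path.parts):
            continue
        try:
            if secret in path.read_text(encoding="utf-8", errors="ignore"):
                hits.append(str(path))
        except OSError:
            continue  # unreadable file; skip rather than crash the scan
    return hits


if __name__ == "__main__":
    api_key = require_api_key()
    print(f"Using key {mask(api_key)}")
    leaks = files_containing(".", api_key)
    if leaks:
        raise SystemExit(f"API key found in workspace files: {leaks}")
```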

Which extension is best for environments behind a strict corporate proxy?

Because it can run entirely offline with open-source models served locally through Ollama, Continue is a strong choice for environments behind a strict corporate proxy, where it helps avoid outbound traffic violations.

Sources & References

External Sources

- National Institute of Standards and Technology (NIST): Artificial Intelligence Risk Management Framework (AI RMF 1.0)

- OWASP Top 10 for LLM Applications: LLM02: Insecure Output Handling

- Center for Internet Security (CIS): CIS Controls v8

Internal Sources

- Why "Vibe Coding" Is Destroying Your Codebase

- Managing technical debt in the age of Devin and Cline

- How to audit AI-generated code in Scrum sprints