Cheapest AI PC Laptops 2026: 5 Rules to Avoid Crippled Hardware

What's New in This Update

- Updated 2026 Price Floors: Validated current entry-level pricing for Snapdragon X Plus and budget AMD Ryzen AI 300 models.

- Unified Memory Math: Added specific VRAM calculations for running 7B and 8B open-source models natively.

- Thermal Reality Checks: Included new data on how budget plastic chassis impact sustained Neural Processing Unit (NPU) performance.

Executive Snapshot: The Bottom Line

- The Baseline Reality: Do not deploy any budget machine boasting less than 40 TOPS; it will fail modern Copilot+ workloads.

- The Architecture Mandate: Ensure the NPU is fully integrated into the silicon (System on Chip) for essential battery efficiency.

- The Software Optimizer: You must audit the software layer; utilizing optimized tools maximizes the performance of entry-level hardware.

Procurement teams and students are increasingly tempted by entry-level AI hardware to minimize capital expenditure during technology refresh cycles. However, blindly deploying the cheapest AI PC laptops without auditing their silicon architectures often results in bottlenecked hardware that destroys workforce productivity.

By applying a rigorous evaluation framework, you can cut costs aggressively without purchasing crippled, obsolete machines. As detailed in our master guide on navigating the AI laptop market, buying at the low end requires ignoring flashy marketing stickers and focusing strictly on sustained neural processing capabilities.

The 5-Step Framework to Audit Entry-Level AI Hardware

Acquiring budget processors requires a defense-in-depth approach to hardware evaluation. When Original Equipment Manufacturers (OEMs) cut costs to produce the cheapest AI PC laptops, they typically compromise on thermal management and memory bandwidth. Follow these five steps to ensure your budget machines actually perform when tasked with generating local content.

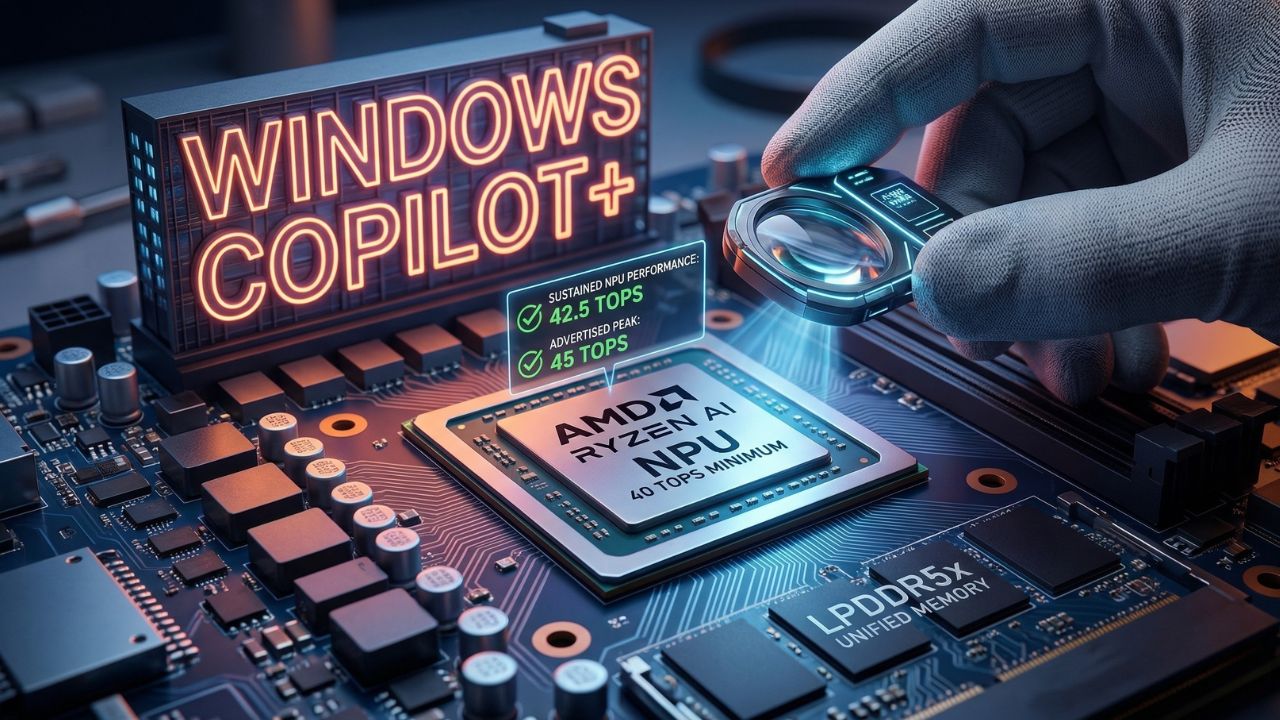

Step 1: Enforce the 40 TOPS Minimum Floor

In 2026, 40 TOPS (Trillions of Operations Per Second) is the absolute entry fee for the Microsoft Copilot+ ecosystem. Many older or severely budget-restricted machines utilize processors outputting a meager 10 to 15 TOPS. These machines cannot run local Windows AI features natively; they will immediately offload basic tasks to the cloud, introducing severe latency and completely negating the privacy benefits of an AI PC.

Step 2: Validate System-on-Chip (SoC) Integration

Do not purchase entry-level hardware that relies on discrete entry-level GPUs for basic inference. You must ensure the NPU is integrated directly into the silicon as part of the System on Chip. This integrated architecture processes AI inferencing at a mere fraction of the wattage required by a dedicated graphics card, offering superior battery life for mobile workforces. Understanding what an NPU is and why 45+ TOPS matters is critical before signing a purchase order.

Step 3: Check for "Thermal Throttling" Vulnerabilities

Budget chassis are notorious for failing to cool the NPU during heavy inferencing tasks. Unlike a central processor that bursts to high speeds for seconds at a time, an NPU running a local Large Language Model (LLM) generates sustained heat via constant matrix multiplications. If the cooling solution relies on a single shared heat pipe or cheap plastic casing, the NPU will rapidly hit its thermal ceiling and throttle. It will then dump the workload back onto the CPU, causing catastrophic system-wide lag.

Step 4: Demand LPDDR5x Unified Memory

A fast NPU is entirely useless if it is starved of data. When evaluating the cheapest AI PC laptops, you must look for high-bandwidth LPDDR5x unified memory. This architecture eliminates the severe bottleneck created when data must be painstakingly moved between standard system RAM and a separate VRAM pool.

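If you intend to run models locally, you must calculate the minimum RAM and VRAM requirements. A standard 7B parameter open-source model running at 4-bit quantization requires approximately 4.5GB to 5GB of RAM purely to sit in memory: the weights alone take about 3.5GB (7 billion parameters at half a byte each), and runtime buffers and the context cache account for the rest. If your laptop has only 8GB total, the operating system and other applications leave too little headroom, and the machine will swap heavily or fail to load the model. 16GB of unified memory is the non-negotiable minimum for 2026, while 32GB is strongly recommended for developers.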
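The memory math in this step can be sketched in a few lines of Python. This is a rough estimate, not a measurement: the `overhead_gb` and `os_reserve_gb` defaults are illustrative assumptions (runtime buffers, context cache, and what the operating system keeps for itself), and the function names are hypothetical.

```python
def model_memory_gb(params_billion: float, bits_per_weight: int,
                    overhead_gb: float = 1.0) -> float:
    """Rough resident size of a quantized model, in GB (1 GB = 1e9 bytes).

    overhead_gb is an assumed allowance for runtime buffers and context cache.
    """
    weight_bytes = params_billion * 1e9 * bits_per_weight / 8
    return weight_bytes / 1e9 + overhead_gb


def fits_in_memory(total_ram_gb: float, model_gb: float,
                   os_reserve_gb: float = 4.0) -> bool:
    """True if the model plus an assumed OS/application reserve fits in RAM."""
    return model_gb + os_reserve_gb <= total_ram_gb


size = model_memory_gb(params_billion=7, bits_per_weight=4)
for ram in (8, 16, 32):
    print(f"{ram}GB laptop, {size:.1f}GB model: fits={fits_in_memory(ram, size)}")
```

Under these assumptions, a 7B model at 4-bit lands at the low end of the 4.5GB to 5GB range quoted above and does not fit comfortably in 8GB. Adjust the reserve figures to match your own operating system image.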

Step 5: Leverage Quantized Open-Source Models

Hardware is only half the battle. To make cheap hardware work harder, individuals and IT teams must deploy the right software stack. Using the best open source tools for running local LLMs ensures you maximize the performance of entry-level hardware by utilizing highly optimized, quantized models (like Llama 3 8B or DeepSeek R1 7B) that execute efficiently on budget NPUs.
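The spec-sheet portion of the five steps (Steps 1, 2, and 4) can be expressed as a simple checklist. The sketch below is a minimal illustration: the `LaptopSpec` fields, the `audit` function, and the two sample machines are hypothetical, and the thresholds are the ones stated in this guide. Thermal behavior (Step 3) and software choice (Step 5) cannot be read off a spec sheet, so they are not covered here.

```python
from dataclasses import dataclass

MIN_NPU_TOPS = 40.0   # Step 1 floor from this guide
MIN_MEMORY_GB = 16    # Step 4 minimum from this guide


@dataclass
class LaptopSpec:
    name: str
    npu_tops: float        # vendor-quoted NPU TOPS
    npu_integrated: bool   # NPU is part of the SoC (Step 2)
    memory_gb: int
    memory_type: str       # e.g. "LPDDR5x" or "DDR5"


def audit(spec: LaptopSpec) -> list[str]:
    """Return the list of failed checks; an empty list means the spec passes."""
    failures = []
    if spec.npu_tops < MIN_NPU_TOPS:
        failures.append(f"NPU below {MIN_NPU_TOPS:.0f} TOPS")
    if not spec.npu_integrated:
        failures.append("NPU not integrated into the SoC")
    if spec.memory_type.lower() != "lpddr5x":
        failures.append("memory is not LPDDR5x")
    if spec.memory_gb < MIN_MEMORY_GB:
        failures.append(f"less than {MIN_MEMORY_GB}GB of memory")
    return failures


# Hypothetical example machines, not real product listings.
candidates = [
    LaptopSpec("budget-a", 45.0, True, 16, "LPDDR5x"),
    LaptopSpec("budget-b", 11.0, True, 8, "DDR5"),
]
for c in candidates:
    print(c.name, audit(c) or "passes spec-sheet checks")
```

A machine that passes this check still needs the sustained-load verification described in Step 3 before purchase.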

The Hidden Trap: Sustained vs. Peak TOPS

The most catastrophic mistake enterprise procurement teams make is evaluating budget laptops based solely on "Peak TOPS" marketing stickers rather than "Sustained TOPS." Manufacturers heavily advertise peak speeds—a metric the machine can physically only maintain for a few seconds before hitting thermal limits.

Once a poorly cooled budget PC hits this limit, the NPU throttles down significantly to prevent hardware damage. A machine advertised at 45 TOPS might realistically sustain only 15 TOPS during a 30-minute localized coding session or data analysis run, rendering it effectively useless for continuous enterprise tasks.
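To make the peak-versus-sustained gap concrete, here is a minimal sketch of how a sustained figure could be derived from throughput samples logged during a long run. The sample values are invented for illustration and mirror the 45 TOPS and 15 TOPS example above. The function names and the choice to discard the first two samples as warm-up are assumptions, not a standard benchmark method.

```python
from statistics import mean


def sustained_tops(samples: list[float], warmup: int = 2) -> float:
    """Average throughput after discarding the first `warmup` samples."""
    steady = samples[warmup:]
    if not steady:
        raise ValueError("not enough samples after warm-up")
    return mean(steady)


def sustained_ratio(peak_tops: float, sustained: float) -> float:
    """Fraction of the advertised peak that the machine actually holds."""
    if peak_tops <= 0:
        raise ValueError("peak_tops must be positive")
    return sustained / peak_tops


# Hypothetical per-interval readings from a throttling budget chassis.
readings = [45.0, 40.0, 15.0, 15.0, 15.0, 15.0]
steady = sustained_tops(readings)
print(f"sustained: {steady:.1f} TOPS, "
      f"{sustained_ratio(45.0, steady):.0%} of advertised peak")
```

A machine holding only a third of its advertised peak is the scenario this section warns about, and it is the reason to ask vendors for sustained data rather than peak figures.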

Expert Insight & Pro-Tip: When purchasing cheap AI laptops in bulk, always request a "Sustained Thermal Design Power (TDP)" benchmark from your OEM representative. If the vendor refuses to provide sustained NPU wattage data over a 60-minute load, instantly disqualify that hardware tier.

Budget Silicon Breakdown (2026 Edition)

To safely navigate the $700–$900 price bracket, you must understand the specific silicon powering these devices. Here is how the primary budget architectures compare for local inference:

| Hardware Tier / Processor | Memory Architecture | Expected Sustained Performance | Verdict for Budget Buyers |
|---|---|---|---|
| Qualcomm Snapdragon X Plus | LPDDR5x (Unified) | ~40 TOPS (High Efficiency) | Excellent: Best battery life in class. Perfect for students and standard enterprise Copilot+ tasks. |
| AMD Ryzen AI 300 (Base SKUs) | LPDDR5x (High Freq) | ~45 TOPS | Ideal: Strong multi-core CPU performance pairs well with the NPU for mixed developer workloads. |
| Intel Core Ultra Series 1 (Meteor Lake) | DDR5 (Standard) | < 15 TOPS | Avoid: Fails the 2026 Copilot+ 40 TOPS requirement. Prone to severe bottlenecking on local models. |

Enterprise ROI: Cloud APIs vs. Budget Local Hardware

Why bother buying a specialized laptop when you can just pay for a ChatGPT Plus subscription? The answer lies in the unit economics of scaling artificial intelligence. Relying on cloud APIs or SaaS subscriptions creates a perpetual, metered drain on operational budgets.

A standard $20/month subscription costs $960 over a standard four-year laptop lifespan, more than the cost of the laptop itself. If an enterprise scales this to 500 employees, the total reaches $480,000 over the same period. By investing upfront in capable, NPU-enabled hardware, you eliminate this recurring subscription debt. A thorough analysis of the cost of running LLMs locally versus the cloud proves that budget Copilot+ PCs achieve ROI within 14 months for daily AI users.
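The subscription arithmetic is easy to reproduce and adapt to your own headcount. The sketch below uses the $20/month fee and four-year lifespan from this section. The `upfront_premium` value in the break-even example is a hypothetical figure chosen for illustration, not a number taken from the analysis cited above.

```python
import math


def subscription_cost(monthly_fee: float, months: int, seats: int = 1) -> float:
    """Total subscription spend over the period for the given number of seats."""
    return monthly_fee * months * seats


def break_even_months(upfront_premium: float, monthly_fee: float) -> int:
    """Months of avoided subscription fees needed to cover an upfront cost."""
    return math.ceil(upfront_premium / monthly_fee)


per_user = subscription_cost(monthly_fee=20, months=48)
fleet = subscription_cost(monthly_fee=20, months=48, seats=500)
print(f"per user over four years: ${per_user:,.0f}")
print(f"500 seats over four years: ${fleet:,.0f}")

# Hypothetical: extra hardware spend per laptop attributable to the NPU tier.
print("break-even months:", break_even_months(upfront_premium=280, monthly_fee=20))
```

The break-even point depends entirely on which upfront cost you count, whether the full laptop price or only the premium over a non-AI machine, so run the numbers with your own procurement quotes.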

Conclusion: Value Without Compromise

Procuring the cheapest AI PC laptops doesn't have to mean compromising your workforce's capabilities or accepting planned obsolescence. By rigorously enforcing the 40 TOPS minimum, demanding unified memory, and verifying thermal chassis integrity, you can secure cost-effective hardware that actively drives productivity rather than hindering it.

Focus on the silicon architecture, ignore the marketing stickers, and ensure you are buying hardware capable of sustained inference. Return to our master guide to ensure your organizational procurement framework is fully aligned with 2026 local AI standards.

Frequently Asked Questions (FAQ)

Entry-level AI laptops meeting the strict 40 TOPS Copilot+ certification generally start around the $700 to $850 mark. Models priced significantly lower than this usually feature older, crippled NPUs (like Intel's first-generation Meteor Lake) that cannot efficiently process modern localized generative AI tasks.

Yes, but only if they meet the minimum baseline of an integrated NPU (SoC) and at least 16GB of LPDDR5x unified memory. If a cheap laptop compromises on these two factors, it will fail to run local models efficiently, experience severe memory bottlenecks, and ultimately act as a poor investment.

Budget models utilizing the latest generation of mid-tier AMD Ryzen AI 300 or Intel Core Ultra Series 2 processors typically land in the 40 to 48 TOPS range, just clearing the Copilot+ requirement. That is enough NPU performance for Windows AI integration without jumping into premium enterprise pricing tiers.

A budget AI PC can run smaller, heavily quantized open-source models (like Llama 3 8B or Mistral) efficiently, provided it features high-bandwidth LPDDR5x memory. However, it will struggle and likely crash if tasked with massive, non-quantized developer models that demand 64GB+ of VRAM.

To cut costs while retaining expensive silicon, manufacturers typically compromise on thermal chassis cooling, display color accuracy, and overall build materials (opting for plastic over aluminum). The lack of proper NPU cooling is the most critical compromise, as it directly leads to thermal throttling during sustained workloads.

The AI Chromebook market is expanding, focusing heavily on cloud-based AI tools integrated directly into Google Workspace. However, for strictly local, on-device AI inference without an internet connection, Windows-based Copilot+ PCs or entry-level M-series MacBooks currently offer vastly superior localized software ecosystems.

Both companies aggressively target the entry-level market. Budget AMD Ryzen AI chips frequently offer superior integrated graphics performance alongside their NPUs, which is excellent for creative tasks. Intel's budget Core Ultra line often excels in raw CPU single-core speed and deep legacy software compatibility.

For strictly AI tasks, a cheap new laptop is vastly superior. Used premium laptops from 2023 or earlier entirely lack the modern, high-TOPS NPUs required to process localized AI efficiently. An older premium CPU will instantly overheat and drain its battery attempting matrix multiplications that a new budget NPU handles flawlessly.

Lenovo and Asus consistently provide exceptional value in the budget AI sector. Their entry-level enterprise (ThinkPad) and student (IdeaPad/Vivobook) lines frequently feature certified 40 TOPS processors while maintaining adequate baseline cooling, avoiding the severe throttling seen in some ultra-cheap alternatives.

If you strictly adhere to the 40 TOPS and 16GB unified memory baseline, a budget AI PC should comfortably last a standard 3-to-4-year corporate refresh cycle before localized software demands (like running 14B parameter models natively) completely outpace the entry-level silicon architectures.
